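I have experimented with RAG while learning both the LlamaIndex and LangChain frameworks. I am now starting an advanced RAG series so we can learn AI and grow together. Likes and comments are welcome.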

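Introduction

Let's learn LlamaIndex's more full-featured multi-document RAG together. You can follow along with the official documentation for comparison.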

Advanced RAG – LlamaIndex Multi-Doc Agent

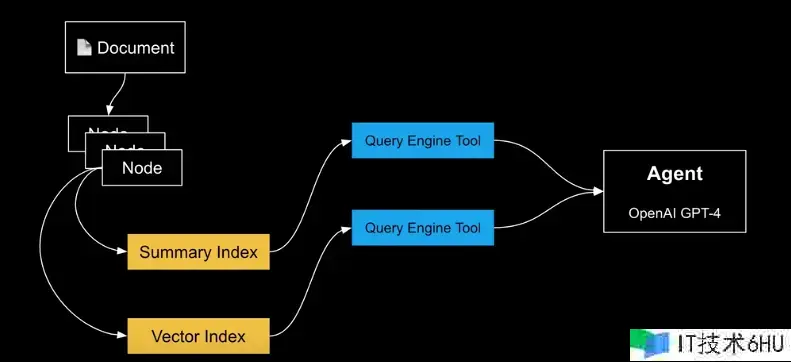

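- Single-document RAG agent workflow

Let's walk through the document-processing flow using the figure above. A Document is parsed into multiple data nodes (Nodes), similar to the element concept from the "Advanced RAG: Semi-Structured Data" post. LlamaIndex really does have an edge in document RAG; in this area LangChain can cede the ground to it.

Once the document has been split into nodes, we start building indexes: a Summary Index and a Vector Index. The Summary Index covers every node of the document, which makes it suited to summary-style questions over the whole text. The Vector Index embeds the nodes and stores the vectors so that the most relevant nodes can be retrieved by similarity.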
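To make the difference between the two kinds of index concrete, here is a minimal pure-Python sketch. It does not use LlamaIndex: `split_into_nodes`, `summary_retrieve`, and `vector_retrieve` are hypothetical names, and word-count vectors stand in for real embeddings.

```python
import math
import re
from collections import Counter


def split_into_nodes(text, sentences_per_node=2):
    """Split text into nodes of a few sentences each (toy stand-in for a node parser)."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [
        " ".join(sentences[i : i + sentences_per_node])
        for i in range(0, len(sentences), sentences_per_node)
    ]


def embed(text):
    """Toy embedding (assumption): a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def summary_retrieve(nodes, query):
    """Summary-style retrieval: every node is passed on, in document order."""
    return list(nodes)


def vector_retrieve(nodes, query, top_k=1):
    """Vector-style retrieval: only the top_k nodes most similar to the query."""
    q = embed(query)
    ranked = sorted(nodes, key=lambda n: cosine(embed(n), q), reverse=True)
    return ranked[:top_k]


doc = (
    "La Liga was founded in 1929. It is the top Spanish football division. "
    "Real Madrid has won the most titles. Barcelona is its main rival."
)
nodes = split_into_nodes(doc)
print(len(summary_retrieve(nodes, "Summarize the league")))
print(vector_retrieve(nodes, "Who has won the most titles?"))
```

The summary-style path hands every node to the LLM, which costs more but sees the whole document. The vector-style path sends only the closest nodes, which is cheaper and better for narrow questions.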

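With the indexes in place, we can use the tooling LlamaIndex provides to wrap them as Query Engine Tools, which are exposed as functions.

Finally, GPT-4 calls the appropriate Query Engine Tool function depending on the question and completes the task. This gives users an agent for document RAG retrieval.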
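The idea of "tools as functions that an agent picks between" can be sketched without any library. Everything below is hypothetical illustration: `Tool`, `make_tools`, and `agent_answer` are made-up names, and `choose_tool` is a keyword rule standing in for the decision that GPT-4 makes through function calling.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List


def tokens(text: str) -> set:
    """Lowercased word set, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class Tool:
    name: str
    description: str
    fn: Callable[[str], str]


def make_tools(nodes: List[str]) -> Dict[str, Tool]:
    def vector_fn(query: str) -> str:
        # Stand-in for a vector query engine: the node sharing most words with the query.
        return max(nodes, key=lambda n: len(tokens(n) & tokens(query)))

    def summary_fn(query: str) -> str:
        # Stand-in for a summary query engine: uses every node.
        return " ".join(nodes)

    return {
        "vector_tool": Tool("vector_tool", "Questions about specific facts.", vector_fn),
        "summary_tool": Tool("summary_tool", "Requests for a holistic summary.", summary_fn),
    }


def choose_tool(query: str) -> str:
    # Assumption: a keyword rule replaces the LLM's function-calling decision.
    wants_summary = tokens(query) & {"summary", "summarize", "overview"}
    return "summary_tool" if wants_summary else "vector_tool"


def agent_answer(query: str, tools: Dict[str, Tool]) -> str:
    tool = tools[choose_tool(query)]
    return f"[{tool.name}] {tool.fn(query)}"


nodes = ["The league was founded in 1929.", "Real Madrid has won the most titles."]
tools = make_tools(nodes)
print(agent_answer("When was the league founded?", tools))
print(agent_answer("Give me a summary of the league", tools))
```

In the real workflow the tool descriptions matter much more than they do here, because the LLM reads them to decide which function to call.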

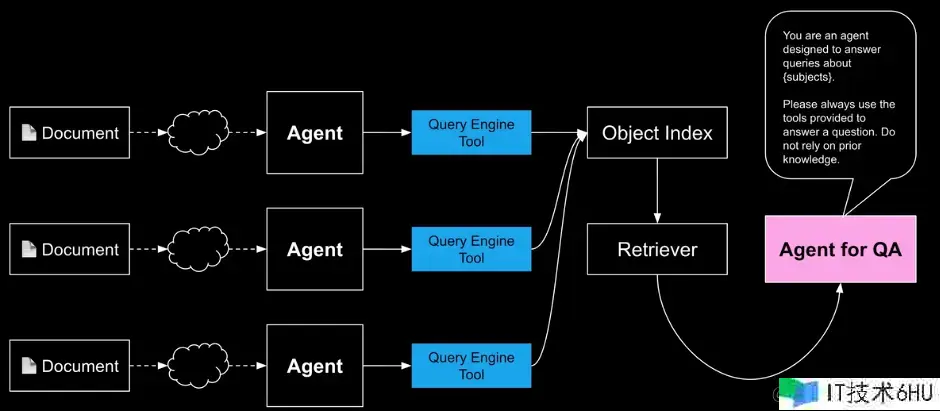

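- Multi-document RAG agent workflow

Now we have several documents, and each one is wrapped into its own independent Agent following the flow above.

In the figure above, every Query Engine Tool is managed by an object index called the Object Index. The Object Index knows what each Query Engine Tool covers, so at query time it knows which document to call. With the Object Index we can instantiate a Retriever and build the Agent for QA, which works together with the LLM.

Let's look at the QA Agent's prompt. It tells the LLM to always answer questions using the Query Engine Tools managed by the Object Index.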
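The tool-retrieval step can be pictured as a search over tool descriptions. The sketch below is a simplification under stated assumptions: `retrieve_tools` is a hypothetical helper, word overlap replaces embedding similarity, and the description text mirrors the wording used for the per-document tools later in this post.

```python
import re


def tokens(text):
    """Lowercased word set, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


wiki_titles = ["Serie A", "Premier League", "Bundesliga", "La Liga", "Ligue 1"]

# One description per document tool, keyed by tool name.
tool_descriptions = {
    f"tool_{title.replace(' ', '_')}": (
        f"This content contains Wikipedia articles about {title}. Use"
        f" this tool if you want to answer any questions about {title}."
    )
    for title in wiki_titles
}


def retrieve_tools(query, descriptions, top_k=2):
    """Return the names of the top_k tools whose descriptions best match the query."""
    q = tokens(query)
    ranked = sorted(
        descriptions,
        key=lambda name: len(q & tokens(descriptions[name])),
        reverse=True,
    )
    return ranked[:top_k]


selected = retrieve_tools("Compare the Premier League and La Liga", tool_descriptions)
print(selected)
```

Only the selected tools are handed to the top-level agent, so the LLM never has to read the descriptions of every document tool at once.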

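Hands-on

In this example we will use the following LlamaIndex components:

- VectorStoreIndex: vector storage for the text data

- SummaryIndex: an index for summarizing the text

- ObjectIndex: an index over QueryEngineTools and Agents

- QueryEngineTool: the class that implements a query engine tool

- OpenAIAgent: the OpenAI agent

- FnRetrieverOpenAIAgent: the retriever agent

Let's code!

- Install LlamaIndex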

!pip install llama-index -q -U

- Set up OpenAI

import os
import openai

os.environ["OPENAI_API_KEY"] = "your valid openai api key"
openai.api_key = os.environ["OPENAI_API_KEY"]

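- Import LlamaIndex components

# These import paths match llama-index releases before 0.10; newer releases moved them under llama_index.core
from llama_index import (
    VectorStoreIndex,
    SummaryIndex,
    SimpleDirectoryReader,
    ServiceContext,
)
from llama_index.tools import QueryEngineTool, ToolMetadata
from llama_index.llms import OpenAI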

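- Documents

We use the Wikipedia articles on Europe's top five football leagues, so there are multiple documents. The RAG system should answer questions about these leagues, not just one league at a time but across several. For example: compare the history of the Premier League and La Liga, and their performance in the Champions League. I am really looking forward to retrieval across multiple documents followed by synthesis…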

wiki_titles = [
    "Serie A",
    "Premier League",
    "Bundesliga",
    "La Liga",
    "Ligue 1",
]

# Download the documents
from pathlib import Path
import requests

for title in wiki_titles:
    response = requests.get(
        "https://en.wikipedia.org/w/api.php",
        params={
            "action": "query",
            "format": "json",
            "titles": title,
            "prop": "extracts",
            "explaintext": True,
        },
    ).json()
    # The pages dict is keyed by page id, so take its first (and only) value
    page = next(iter(response["query"]["pages"].values()))
    wiki_text = page["extract"]

    data_path = Path("data")
    if not data_path.exists():
        Path.mkdir(data_path)

    with open(data_path / f"{title}.txt", "w", encoding="utf-8") as fp:
        fp.write(wiki_text)
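The response handling in the download loop is easier to follow with a concrete payload. The sketch below uses a hand-written dictionary in the shape the loop expects; the page id, the text, and the helper name `extract_page_text` are made up for illustration, and the error branch is an assumption about what a response without an extract looks like.

```python
def extract_page_text(payload):
    """Pull the plain-text extract out of a query response with a single page."""
    pages = payload["query"]["pages"]
    # The page id is not known in advance, so take the first value.
    page = next(iter(pages.values()))
    text = page.get("extract")
    if text is None:
        # Assumption: a page that could not be found carries no "extract" field.
        raise ValueError(f"No extract returned for {page.get('title', 'unknown title')!r}")
    return text


sample_payload = {
    "query": {
        "pages": {
            "12345": {  # hypothetical page id
                "title": "La Liga",
                "extract": "La Liga is the top Spanish football division.",
            }
        }
    }
}

print(extract_page_text(sample_payload))
```

Failing loudly when the extract is absent avoids silently writing an empty or broken text file into the data directory.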

- Load the documents

leagues_docs = {}
for wiki_title in wiki_titles:
    leagues_docs[wiki_title] = SimpleDirectoryReader(
        input_files=[f"data/{wiki_title}.txt"]
    ).load_data()

# gpt-3.5-turbo is good enough for the text processing
llm = OpenAI(temperature=0, model="gpt-3.5-turbo")
service_context = ServiceContext.from_defaults(llm=llm)

- Build the summary index and the vector index

# OpenAI agent
from llama_index.agent import OpenAIAgent
from llama_index import load_index_from_storage, StorageContext
# Node splitter
from llama_index.node_parser import SentenceSplitter
import os

# Instantiate the splitter
node_parser = SentenceSplitter()

# Agents dict, one agent per document
agents = {}
# Query engines dict (this is for the baseline)
query_engines = {}
# All nodes across all documents
all_nodes = []

for idx, wiki_title in enumerate(wiki_titles):
    nodes = node_parser.get_nodes_from_documents(leagues_docs[wiki_title])
    all_nodes.extend(nodes)

    if not os.path.exists(f"./data/{wiki_title}"):
        # Build the vector index and persist it to disk
        vector_index = VectorStoreIndex(nodes, service_context=service_context)
        vector_index.storage_context.persist(persist_dir=f"./data/{wiki_title}")
    else:
        # Reuse the index persisted by an earlier run
        vector_index = load_index_from_storage(
            StorageContext.from_defaults(persist_dir=f"./data/{wiki_title}"),
            service_context=service_context,
        )

    # Build the summary index
    summary_index = SummaryIndex(nodes, service_context=service_context)

    # Define one query engine per index
    vector_query_engine = vector_index.as_query_engine()
    summary_query_engine = summary_index.as_query_engine()

    # Wrap the query engines as tools
    query_engine_tools = [
        QueryEngineTool(
            query_engine=vector_query_engine,
            metadata=ToolMetadata(
                name="vector_tool",
                description=(
                    "Useful for questions related to specific aspects of"
                    f" {wiki_title} (e.g. the history, teams "
                    "and performance in EU, or more)."
                ),
            ),
        ),
        QueryEngineTool(
            query_engine=summary_query_engine,
            metadata=ToolMetadata(
                name="summary_tool",
                description=(
                    "Useful for any requests that require a holistic summary"
                    f" of EVERYTHING about {wiki_title}. For questions about"
                    " more specific sections, please use the vector_tool."
                ),
            ),
        ),
    ]

    # Build the agent for this document
    function_llm = OpenAI(model="gpt-4")
    agent = OpenAIAgent.from_tools(
        query_engine_tools,
        llm=function_llm,
        verbose=True,
        system_prompt=f"""\
You are a specialized agent designed to answer queries about {wiki_title}.
You must ALWAYS use at least one of the tools provided when answering a question; do NOT rely on prior knowledge.\
""",
    )

    agents[wiki_title] = agent
    query_engines[wiki_title] = vector_index.as_query_engine(similarity_top_k=2)
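The loop above builds each vector index only when its persist directory is missing, and otherwise loads it from disk. That build-or-load pattern can be sketched on its own with the standard library. Here `build_index` is a hypothetical stand-in for the expensive embedding step, and a JSON file stands in for the persisted index.

```python
import json
import tempfile
from pathlib import Path


def build_index(nodes):
    """Stand-in for an expensive index build (e.g. calling an embedding API)."""
    return {"nodes": nodes, "lengths": [len(n) for n in nodes]}


def load_or_build(persist_dir, nodes):
    """Load the index from persist_dir if present, otherwise build and persist it."""
    persist_dir = Path(persist_dir)
    index_file = persist_dir / "index.json"
    if index_file.exists():
        return json.loads(index_file.read_text(encoding="utf-8")), "loaded"
    index = build_index(nodes)
    persist_dir.mkdir(parents=True, exist_ok=True)
    index_file.write_text(json.dumps(index), encoding="utf-8")
    return index, "built"


with tempfile.TemporaryDirectory() as tmp:
    first = load_or_build(Path(tmp) / "La Liga", ["node one", "node two"])
    second = load_or_build(Path(tmp) / "La Liga", ["node one", "node two"])
    print(first[1], second[1])
```

One caveat of this pattern is that a stale directory is reused as-is: if the source text changes, the persisted index has to be deleted by hand before the next run.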

- Tools

all_tools = []
for wiki_title in wiki_titles:
    wiki_summary = (
        f"This content contains Wikipedia articles about {wiki_title}. Use"
        f" this tool if you want to answer any questions about {wiki_title}.\n"
    )
    # Each per-document agent is itself exposed as a tool
    doc_tool = QueryEngineTool(
        query_engine=agents[wiki_title],
        metadata=ToolMetadata(
            name=f"tool_{wiki_title.replace(' ', '_')}",
            description=wiki_summary,
        ),
    )
    all_tools.append(doc_tool)

- Object index

from llama_index import VectorStoreIndex
from llama_index.objects import ObjectIndex, SimpleToolNodeMapping

# Index the tools themselves so they can be retrieved by similarity
tool_mapping = SimpleToolNodeMapping.from_objects(all_tools)
obj_index = ObjectIndex.from_objects(
    all_tools,
    tool_mapping,
    VectorStoreIndex,
)

- Retriever agent

from llama_index.agent import FnRetrieverOpenAIAgent

top_agent = FnRetrieverOpenAIAgent.from_retriever(
    obj_index.as_retriever(similarity_top_k=3),
    system_prompt="""\
You are an agent designed to answer queries about the European top football leagues.
Please always use the tools provided to answer a question. Do not rely on prior knowledge.\
""",
    verbose=True,
)

- Query

# Assumed question, chosen to match the printed result below; the source does not show the query call itself
response = top_agent.query(
    "Tell me about the history of La Liga and its performance in the Champions League."
)
print(response)

- Printed result

La Liga has a rich history dating back to the 1930s. During the early years, Athletic Club was the dominant team, but in the 1940s, Atlético Madrid, Barcelona, and Valencia emerged as strong contenders. The 1950s saw the rise of FC Barcelona and the dominance of Real Madrid, which continued into the 1960s and 1970s.

In terms of UEFA Champions League (UCL) performance, La Liga teams have had a significant impact. Real Madrid, Barcelona, and Atlético Madrid have been particularly successful, with multiple UCL titles to their names. Other Spanish clubs like Sevilla and Valencia have also won international trophies. La Liga consistently sends a significant number of teams to the Champions League group stage, showcasing the league's overall strength and competitiveness.

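Summary

- LlamaIndex provides a rich set of components for multi-document RAG, including VectorStoreIndex, SummaryIndex, ObjectIndex, QueryEngineTool, and FnRetrieverOpenAIAgent. The process is complex but well organized.

- Besides VectorStoreIndex and SummaryIndex, there are also agent indexes and object indexes.

- With the right prompt design, the user's question is routed to the matching tool, which queries the matching index, and the results are merged at the end. Cool.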