The basic steps for deploying a Kafka cluster in KRaft (Raft) mode are as follows:

1) Prepare a Kubernetes cluster

Make sure you have a running Kubernetes cluster and that the kubectl command-line tool is set up. For a deployment tutorial, see:

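2) Install Helm

```bash
# Download the package (run these commands in /opt/helm; the symlink below points there)
wget https://get.helm.sh/helm-v3.9.4-linux-amd64.tar.gz

# Extract the archive
tar -xf helm-v3.9.4-linux-amd64.tar.gz

# Create a symbolic link
ln -s /opt/helm/linux-amd64/helm /usr/local/bin/helm

# Verify
helm version
helm help
```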
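Step 4 later runs `helm install ... --create-namespace`, which needs Helm 3.2 or newer. As a sketch, the hypothetical helper below parses a version string in the format printed by `helm version --short` (for example `v3.9.4+g<commit>`) and compares it with a minimum major.minor version. The function name and its return codes are illustrative assumptions. They are not part of Helm.

```bash
# Hypothetical helper: return 0 if a Helm version string such as
# "v3.9.4+g<commit>" (format of `helm version --short`) is at least
# major.minor ($2.$3); return 1 if it is older; return 2 if it cannot be parsed.
helm_version_at_least() {
  local v major rest minor
  v=${1#v}            # "3.9.4+g<commit>"
  v=${v%%+*}          # "3.9.4" (drop the build metadata)
  case "$v" in
    *.*) ;;
    *) return 2 ;;    # need at least "major.minor"
  esac
  major=${v%%.*}      # "3"
  rest=${v#*.}        # "9.4"
  minor=${rest%%.*}   # "9"
  case "$major" in ''|*[!0-9]*) return 2 ;; esac
  case "$minor" in ''|*[!0-9]*) return 2 ;; esac
  [ "$major" -gt "$2" ] && return 0
  [ "$major" -eq "$2" ] && [ "$minor" -ge "$3" ] && return 0
  return 1
}

# Check the locally installed Helm, if one is on the PATH.
if command -v helm >/dev/null 2>&1; then
  if helm_version_at_least "$(helm version --short)" 3 2; then
    echo "Helm supports --create-namespace"
  else
    echo "Upgrade Helm to 3.2 or newer" >&2
  fi
fi
```

Returning distinct codes for "too old" (1) and "unparseable" (2) lets a calling script tell a real version problem apart from unexpected output.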

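3) Configure the Helm chart

If you use Bitnami's Kafka Helm chart, create a values.yaml file to configure the Kafka cluster. In this file you can enable KRaft mode and configure other settings, such as authentication and ports.

```bash
# Add the chart repository
helm repo add bitnami https://charts.bitnami.com/bitnami

# Download the chart
helm pull bitnami/kafka --version 26.0.0

# Extract it
tar -xf kafka-26.0.0.tgz

# Edit the configuration
vi kafka/values.yaml
```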

Here is an example values.yaml configuration:

```yaml
image:
  registry: registry.cn-hangzhou.aliyuncs.com
  repository: bigdata_cloudnative/kafka
  tag: 3.6.0-debian-11-r0

listeners:
  client:
    containerPort: 9092
    # The chart default is SASL_PLAINTEXT (authentication enabled); PLAINTEXT disables it
    protocol: PLAINTEXT
    name: CLIENT
    sslClientAuth: ""

controller:
  replicaCount: 3 # number of controller nodes
  persistence:
    storageClass: "kafka-controller-local-storage"
    size: "10Gi"
    # These directories must be created on the host in advance
    local:
      - name: kafka-controller-0
        host: "local-168-182-110"
        path: "/opt/bigdata/servers/kraft/kafka-controller/data1"
      - name: kafka-controller-1
        host: "local-168-182-111"
        path: "/opt/bigdata/servers/kraft/kafka-controller/data1"
      - name: kafka-controller-2
        host: "local-168-182-112"
        path: "/opt/bigdata/servers/kraft/kafka-controller/data1"

broker:
  replicaCount: 3 # number of brokers
  persistence:
    storageClass: "kafka-broker-local-storage"
    size: "10Gi"
    # These directories must be created on the host in advance
    local:
      - name: kafka-broker-0
        host: "local-168-182-110"
        path: "/opt/bigdata/servers/kraft/kafka-broker/data1"
      - name: kafka-broker-1
        host: "local-168-182-111"
        path: "/opt/bigdata/servers/kraft/kafka-broker/data1"
      - name: kafka-broker-2
        host: "local-168-182-112"
        path: "/opt/bigdata/servers/kraft/kafka-broker/data1"

service:
  type: NodePort
  nodePorts:
    # The default NodePort range is 30000-32767
    client: "32181"
    tls: "32182"

# Enable metrics for Prometheus
metrics:
  enabled: true

kraft:
  enabled: true
```
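The comments in values.yaml say the data directories must exist on each node before installation. Each node hosts one controller and one broker volume, so each node needs both directories. The sketch below assumes that every node is reachable over SSH under the same name as its `kubernetes.io/hostname` label. That is an assumption, so adjust it for your environment. The hypothetical helper only prints the commands, so you can review them before running anything.

```bash
# Node names and directories copied from the example values.yaml.
# Assumption: each node is reachable over SSH by this name.
NODES="local-168-182-110 local-168-182-111 local-168-182-112"
DIRS="/opt/bigdata/servers/kraft/kafka-controller/data1 /opt/bigdata/servers/kraft/kafka-broker/data1"

# Hypothetical helper: print (not run) one "ssh <node> mkdir -p <dirs>" line
# per node; pipe the output to sh once you have checked it.
print_mkdir_plan() {
  local node
  for node in $NODES; do
    echo "ssh $node mkdir -p $DIRS"
  done
}

print_mkdir_plan
```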

Add the following files to the chart:

- `kafka/templates/broker/pv.yaml`

```yaml
{{- range .Values.broker.persistence.local }}
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: {{ .name }}
  labels:
    name: {{ .name }}
spec:
  storageClassName: {{ $.Values.broker.persistence.storageClass }}
  capacity:
    storage: {{ $.Values.broker.persistence.size }}
  accessModes:
    - ReadWriteOnce
  local:
    path: {{ .path }}
  nodeAffinity:
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
                - {{ .host }}
---
{{- end }}
```

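- `kafka/templates/broker/storage-class.yaml`

```yaml
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: {{ .Values.broker.persistence.storageClass }}
provisioner: kubernetes.io/no-provisioner
# Recommended for local volumes: bind the PV only once a Pod is scheduled
volumeBindingMode: WaitForFirstConsumer
```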

- `kafka/templates/controller-eligible/pv.yaml`

```yaml
{{- range .Values.controller.persistence.local }}
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: {{ .name }}
  labels:
    name: {{ .name }}
spec:
  storageClassName: {{ $.Values.controller.persistence.storageClass }}
  capacity:
    storage: {{ $.Values.controller.persistence.size }}
  accessModes:
    - ReadWriteOnce
  local:
    path: {{ .path }}
  nodeAffinity:
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
                - {{ .host }}
---
{{- end }}
```

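- `kafka/templates/controller-eligible/storage-class.yaml`

```yaml
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: {{ .Values.controller.persistence.storageClass }}
provisioner: kubernetes.io/no-provisioner
# Recommended for local volumes: bind the PV only once a Pod is scheduled
volumeBindingMode: WaitForFirstConsumer
```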
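Each `range` loop in the pv.yaml templates emits one PersistentVolume per entry in `persistence.local`. Inside the loop, `$` is used to reach the root `.Values`. For illustration only, the hypothetical shell function below prints the manifest that a single entry should expand to. This is not how Helm renders templates. It can be useful to compare against the output of `helm template kraft ./kafka -n kraft`.

```bash
# Hypothetical helper: print the PersistentVolume that pv.yaml is expected
# to render for one persistence.local entry.
# Arguments: $1 name, $2 host, $3 path, $4 storageClass, $5 size
render_local_pv() {
  cat <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: $1
  labels:
    name: $1
spec:
  storageClassName: $4
  capacity:
    storage: $5
  accessModes:
    - ReadWriteOnce
  local:
    path: $3
  nodeAffinity:
    required:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
                - $2
EOF
}

# The first broker entry from the example values.yaml
render_local_pv kafka-broker-0 local-168-182-110 \
  /opt/bigdata/servers/kraft/kafka-broker/data1 kafka-broker-local-storage 10Gi
```

The `nodeAffinity` block is what pins each local volume, and therefore the Pod that claims it, to the node that actually holds the directory.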

4) Deploy the Kafka cluster with Helm

```bash
# Prepare the image first: pull it, retag it for the private registry, and push it
docker pull docker.io/bitnami/kafka:3.6.0-debian-11-r0
docker tag docker.io/bitnami/kafka:3.6.0-debian-11-r0 registry.cn-hangzhou.aliyuncs.com/bigdata_cloudnative/kafka:3.6.0-debian-11-r0
docker push registry.cn-hangzhou.aliyuncs.com/bigdata_cloudnative/kafka:3.6.0-debian-11-r0
```
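The retagged image must match the `image.registry`, `image.repository` and `image.tag` fields in values.yaml, which the Bitnami chart joins as `registry/repository:tag`. The sketch below uses hypothetical helpers that derive the mirrored reference from the source reference, so the `docker tag`/`docker push` targets and the values stay consistent. It assumes a plain `name:tag` reference without a digest, and it only prints the docker commands.

```bash
# Hypothetical helper: move an image reference into a mirror namespace,
# keeping its last path component ("name:tag").
# $1 source image, $2 target registry, $3 target namespace
mirror_image_ref() {
  local last
  last=${1##*/}                 # e.g. "kafka:3.6.0-debian-11-r0"
  echo "$2/$3/$last"
}

# Hypothetical helper: split "registry/namespace/name:tag" into the
# image.registry / image.repository / image.tag fields of values.yaml.
print_image_values() {
  local registry rest
  registry=${1%%/*}             # everything before the first "/"
  rest=${1#*/}                  # e.g. "bigdata_cloudnative/kafka:3.6.0-debian-11-r0"
  printf 'image:\n  registry: %s\n  repository: %s\n  tag: %s\n' \
    "$registry" "${rest%:*}" "${rest##*:}"
}

src=docker.io/bitnami/kafka:3.6.0-debian-11-r0
dst=$(mirror_image_ref "$src" registry.cn-hangzhou.aliyuncs.com bigdata_cloudnative)
echo "docker tag $src $dst"
echo "docker push $dst"
print_image_values "$dst"
```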

```bash
# Install the chart
helm install kraft ./kafka -n kraft --create-namespace
```

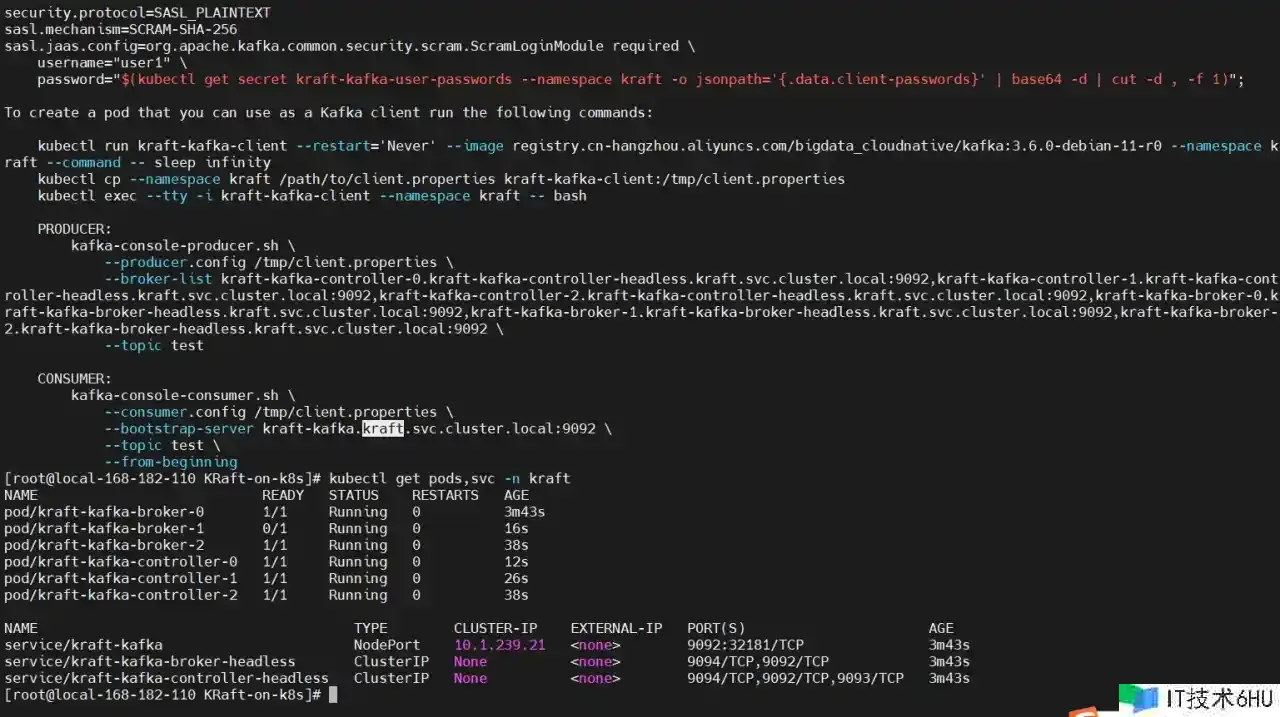

The release NOTES look like this (captured from a later `helm upgrade` of the same release, revision 3):

```text
[root@local-168-182-110 KRaft-on-k8s]# helm upgrade kraft
Release "kraft" has been upgraded. Happy Helming!
NAME: kraft
LAST DEPLOYED: Sun Mar 24 20:05:04 2024
NAMESPACE: kraft
STATUS: deployed
REVISION: 3
TEST SUITE: None
NOTES:
CHART NAME: kafka
CHART VERSION: 26.0.0
APP VERSION: 3.6.0

** Please be patient while the chart is being deployed **

Kafka can be accessed by consumers via port 9092 on the following DNS name from within your cluster:

    kraft-kafka.kraft.svc.cluster.local

Each Kafka broker can be accessed by producers via port 9092 on the following DNS name(s) from within your cluster:

    kraft-kafka-controller-0.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092
    kraft-kafka-controller-1.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092
    kraft-kafka-controller-2.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092
    kraft-kafka-broker-0.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092
    kraft-kafka-broker-1.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092
    kraft-kafka-broker-2.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092

To create a pod that you can use as a Kafka client run the following commands:

    kubectl run kraft-kafka-client --restart='Never' --image registry.cn-hangzhou.aliyuncs.com/bigdata_cloudnative/kafka:3.6.0-debian-11-r0 --namespace kraft --command -- sleep infinity
    kubectl exec --tty -i kraft-kafka-client --namespace kraft -- bash

    PRODUCER:
        kafka-console-producer.sh \
            --broker-list kraft-kafka-controller-0.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092,kraft-kafka-controller-1.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092,kraft-kafka-controller-2.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092,kraft-kafka-broker-0.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092,kraft-kafka-broker-1.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092,kraft-kafka-broker-2.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092 \
            --topic test

    CONSUMER:
        kafka-console-consumer.sh \
            --bootstrap-server kraft-kafka.kraft.svc.cluster.local:9092 \
            --topic test \
            --from-beginning
```
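The chart's PVCs depend on the statically created local PVs, so it is worth confirming after installation that every PersistentVolumeClaim is `Bound`. The minimal sketch below uses a hypothetical function that reads the output of `kubectl get pvc -n kraft --no-headers`, where the second column is STATUS. It prints any claim that is not bound.

```bash
# Hypothetical helper: read `kubectl get pvc --no-headers` output on stdin,
# print every claim whose STATUS (column 2) is not "Bound",
# and return 1 if there was at least one.
report_unbound_pvcs() {
  awk 'NF == 0 { next }
       $2 != "Bound" { print $1 " is " $2; bad = 1 }
       END { exit bad + 0 }'
}

# Usage against the live cluster (skipped when kubectl is not installed).
if command -v kubectl >/dev/null 2>&1; then
  pvcs=$(kubectl get pvc -n kraft --no-headers 2>/dev/null) || pvcs=""
  if [ -z "$pvcs" ]; then
    echo "No PVCs found (or the cluster is unreachable)"
  elif printf '%s\n' "$pvcs" | report_unbound_pvcs; then
    echo "All PVCs in namespace kraft are Bound"
  fi
fi
```

A claim stuck in `Pending` usually means that no PV matches its storage class and size, or that the target directory or node named in values.yaml does not exist.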

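5) Test and verify

```bash
# Create a client pod
kubectl run kraft-kafka-client --restart='Never' \
  --image registry.cn-hangzhou.aliyuncs.com/bigdata_cloudnative/kafka:3.6.0-debian-11-r0 \
  --namespace kraft --command -- sleep infinity

# Open a shell in the client pod; run the kafka-*.sh commands below from there
kubectl exec --tty -i kraft-kafka-client --namespace kraft -- bash
```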

Topic operations (run inside the client pod):

```bash
# Create a topic
kafka-topics.sh --create --topic test \
  --bootstrap-server kraft-kafka-controller-0.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092 \
  --partitions 3 --replication-factor 2

# Show topic details
kafka-topics.sh --describe \
  --bootstrap-server kraft-kafka-controller-0.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092 \
  --topic test

# Delete the topic
kafka-topics.sh --delete --topic test \
  --bootstrap-server kraft-kafka-controller-0.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092
```

Producer and consumer:

```bash
# Producer (--bootstrap-server replaces the deprecated --broker-list flag)
kafka-console-producer.sh \
  --bootstrap-server kraft-kafka-controller-0.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092,kraft-kafka-controller-1.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092,kraft-kafka-controller-2.kraft-kafka-controller-headless.kraft.svc.cluster.local:9092,kraft-kafka-broker-0.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092,kraft-kafka-broker-1.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092,kraft-kafka-broker-2.kraft-kafka-broker-headless.kraft.svc.cluster.local:9092 \
  --topic test

# Consumer
kafka-console-consumer.sh \
  --bootstrap-server kraft-kafka.kraft.svc.cluster.local:9092 \
  --topic test \
  --from-beginning
```
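The long producer address list follows a fixed pattern. Each StatefulSet pod gets a stable DNS name of the form `<pod>.<headless-service>.<namespace>.svc.cluster.local`. The hypothetical helpers below rebuild the list from the release name, namespace and replica counts. They assume the `<release>-kafka-controller-N` / `<release>-kafka-broker-N` naming shown in the NOTES output above, and port 9092.

```bash
# Hypothetical helper: print comma-separated host:port entries for one role.
# $1 release, $2 namespace, $3 role (controller|broker), $4 replicas, $5 port
pod_addresses() {
  local release="$1" ns="$2" role="$3" replicas="$4" port="$5"
  local i=0 sep=""
  while [ "$i" -lt "$replicas" ]; do
    printf '%s%s-kafka-%s-%s.%s-kafka-%s-headless.%s.svc.cluster.local:%s' \
      "$sep" "$release" "$role" "$i" "$release" "$role" "$ns" "$port"
    sep=","
    i=$((i + 1))
  done
}

# Hypothetical helper: controller addresses followed by broker addresses.
# $1 release, $2 namespace, $3 controller replicas, $4 broker replicas
bootstrap_list() {
  local controllers brokers
  controllers=$(pod_addresses "$1" "$2" controller "$3" 9092)
  brokers=$(pod_addresses "$1" "$2" broker "$4" 9092)
  if [ -n "$controllers" ] && [ -n "$brokers" ]; then
    printf '%s,%s\n' "$controllers" "$brokers"
  else
    printf '%s%s\n' "$controllers" "$brokers"   # avoid a stray comma
  fi
}

bootstrap_list kraft kraft 3 3
```

After you paste the functions into the client pod's shell, the producer command could be written as `kafka-console-producer.sh --bootstrap-server "$(bootstrap_list kraft kraft 3 3)" --topic test`. A client needs only one reachable address to discover the whole cluster, so the full list mainly adds redundancy.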

6) Update the cluster

```bash
# Apply changes made to kafka/values.yaml or the chart templates
helm upgrade kraft ./kafka -n kraft
```

7) Delete the cluster

```bash
helm uninstall kraft -n kraft
```
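`helm uninstall` removes the chart's resources. However, PVCs created from a StatefulSet's volumeClaimTemplates are kept by default. The data written to the local directories also stays on each node. The sketch below uses a hypothetical helper that only prints a full teardown sequence for review. Whether you actually delete the PVCs depends on whether you want to keep the data.

```bash
# Hypothetical helper: print (not run) the commands for a full teardown.
# $1 release name, $2 namespace
print_cleanup_plan() {
  echo "helm uninstall $1 -n $2"
  # PVCs from the StatefulSets' volumeClaimTemplates survive helm uninstall
  echo "kubectl delete pvc --all -n $2"
  # Deleting the namespace also removes the kraft-kafka-client test pod
  echo "kubectl delete namespace $2"
}

print_cleanup_plan kraft kraft
```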

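That wraps up this hands-on walkthrough of deploying Raft Kafka on k8s. If you have any questions, you can follow my WeChat official account, 大数据与云原生技术分享 (Big Data & Cloud Native Tech Sharing), for technical discussion. If this article helped you, a like, share, and bookmark would be much appreciated.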
