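I. Preliminary Work

In this article we will recognize characters from One Piece (海贼王).

My environment:

- Language: Python 3.6.5

- Editor: Jupyter Notebook

- Deep learning framework: TensorFlow 2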

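Other highlights:

- Deep Learning 100 Examples - Convolutional Neural Network (CNN) Weather Recognition | Day 5

- Deep Learning 100 Examples - Convolutional Neural Network (VGG-19) Recognizing Characters in Ling Cage (灵笼) | Day 7

From the column: Deep Learning 100 Examples (深度学习100例)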

1. Set up the GPU

If you are using a CPU, you can skip this step.

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

2. Import the data

import matplotlib.pyplot as plt
import os, PIL
import pathlib

# Set random seeds so that results are as reproducible as possible
import numpy as np
np.random.seed(1)

import tensorflow as tf
tf.random.set_seed(1)

from tensorflow import keras
from tensorflow.keras import layers, models

# Dataset location on the author's machine; change it to your own path
data_dir = r"D:\jupyter notebook\DL-100-days\datasets\hzw_photos"
data_dir = pathlib.Path(data_dir)

3. Inspect the data

The dataset contains 7 characters: Luffy (路飞), Zoro (索隆), Nami (娜美), Usopp (乌索普), Chopper (乔巴), Sanji (山治), and Robin (罗宾).

| Folder | Character | Images |
|---|---|---|
| lufei | Luffy (路飞) | 117 |
| suolong | Zoro (索隆) | 90 |
| namei | Nami (娜美) | 84 |
| wusuopu | Usopp (乌索普) | 77 |
| qiaoba | Chopper (乔巴) | 102 |
| shanzhi | Sanji (山治) | 47 |
| luobin | Robin (罗宾) | 105 |

image_count = len(list(data_dir.glob('*/*.png')))
print("Total number of images:", image_count)

Total number of images: 621
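The per-class counts in the table can be cross-checked against the folders on disk. The sketch below uses a hypothetical helper, count_images_per_class (not part of any library). It assumes the image formats image_dataset_from_directory accepts (.bmp, .gif, .jpeg, .jpg, .png), whereas the glob above counts only .png files.

```python
from collections import Counter
from pathlib import Path

# Assumption: these mirror the image formats image_dataset_from_directory accepts
IMAGE_EXTS = {".bmp", ".gif", ".jpeg", ".jpg", ".png"}


def count_images_per_class(data_dir):
    """Hypothetical helper: count image files in each class subfolder of data_dir."""
    counts = Counter()
    for path in Path(data_dir).glob("*/*"):
        # Only files directly inside a class folder, with an accepted extension
        if path.is_file() and path.suffix.lower() in IMAGE_EXTS:
            counts[path.parent.name] += 1
    return dict(sorted(counts.items()))
```

Calling count_images_per_class(data_dir) returns a folder-to-count dict. Its values should sum to the file total that image_dataset_from_directory reports.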

II. Data Preprocessing

1. Load the data

Use the image_dataset_from_directory method to load the images from disk into a tf.data.Dataset.

batch_size = 32
img_height = 224
img_width = 224

# For details on image_dataset_from_directory(), see: https://mtyjkh.blog.csdn.net/article/details/117018789
train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.2,
    subset="training",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 621 files belonging to 7 classes.

Using 497 files for training.

"""

关tensorflow版别于image_dataset_from_directory()的具体介绍能够参看文章:https://mtyjkhtensorflow怎样读.blog.cstensorflow语音辨认dn.net/article/details/python是什么意思117018789

"""

val_ds = tf.keras.preprocessipython是什么意思ng.im深度学习age_dataset_from_directory(

data_dir,

valitensorflow是干什么的dation_split=0.2,

subset="validation",

seed=123,

image_size=(img_height, img_width),

batch_size=batch_size)appointment

Found 621 file缓存视频兼并app下载s belonging to 7 classes.

Using 124 files for validation.
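The 497/124 split can be reproduced by hand. The sketch assumes Keras reserves int(validation_split * num_files) files for validation and keeps the rest for training, which agrees with the numbers printed above. It also shows why the training log below reports 16 steps per epoch with batch_size = 32. split_counts is a hypothetical helper.

```python
import math


def split_counts(num_files, validation_split):
    """Hypothetical helper: (training, validation) file counts for a given split."""
    num_val = int(validation_split * num_files)  # 0.2 * 621 = 124.2 -> 124
    return num_files - num_val, num_val


train_n, val_n = split_counts(621, 0.2)
steps_per_epoch = math.ceil(train_n / 32)  # the last batch is partial
print(train_n, val_n, steps_per_epoch)  # → 497 124 16
```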

We can print the dataset's labels through class_names. The labels correspond to the directory names, in alphabetical order.

class_names = train_ds.class_names
print(class_names)

['lufei', 'luobin', 'namei', 'qiaoba', 'shanzhi', 'suolong', 'wusuopu']
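Since the class names come from the subdirectory names sorted alphanumerically, the label-to-index mapping can be reproduced without TensorFlow. A small sketch using the folder names from the table:

```python
# Folder names from the table above, in table order
folders = ["lufei", "suolong", "namei", "wusuopu", "qiaoba", "shanzhi", "luobin"]

# Sorted names give the label order; label i means class_names[i]
class_names = sorted(folders)
label_to_index = {name: i for i, name in enumerate(class_names)}

print(class_names)  # → ['lufei', 'luobin', 'namei', 'qiaoba', 'shanzhi', 'suolong', 'wusuopu']
print(label_to_index["suolong"])  # → 5
```

So a predicted label of 5 means Zoro (suolong), not the second row of the table.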

2. Visualize the data

plt.figure(figsize=(10, 5))  # figure is 10 inches wide and 5 inches tall

for images, labels in train_ds.take(1):
    for i in range(8):
        ax = plt.subplot(2, 4, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        plt.title(class_names[labels[i]])
        plt.axis("off")

plt.imshow(images[1].numpy().astype("uint8"))

<matplotlib.image.AxesImage at 0x2adcea36ee0>

3. Check the data again

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

(32, 224, 224, 3)

(32,)

- image_batch is a tensor of shape (32, 224, 224, 3): a batch of 32 images of size 224x224x3 (the last dimension is the RGB color channels).

- labels_batch is a tensor of shape (32,): the labels for those 32 images.

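4. Configure the dataset

- shuffle(): shuffles the data. For details on this function, see: zhuanlan.zhihu.com/p/42417456

- prefetch(): prefetches data to speed up training. It is explained in detail in my previous two articles.

- cache(): caches the dataset in memory to speed up training.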
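Because cache() keeps the decoded images in memory, it helps to estimate how much RAM that takes. A rough sketch, assuming the images stay as 224x224x3 float32 tensors (image_dataset_from_directory yields float32 pixels) and ignoring labels and tf.data overhead. dataset_bytes is a hypothetical helper.

```python
def dataset_bytes(num_images, height, width, channels=3, bytes_per_value=4):
    """Hypothetical helper: raw size of num_images images stored as float32."""
    return num_images * height * width * channels * bytes_per_value


train_bytes = dataset_bytes(497, 224, 224)  # training subset
val_bytes = dataset_bytes(124, 224, 224)    # validation subset
print(round(train_bytes / 1e6, 1), round(val_bytes / 1e6, 1))  # → 299.2 74.7 (MB)
```

About 374 MB in total fits comfortably in memory here. For a much larger dataset, cache() can instead be given a filename to cache on disk.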

AUTOTUNE = tf.data.AUTOTUNE

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)
val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

5. Normalization

normalization_layer = layers.experimental.preprocessing.Rescaling(1./255)

# Apply the same scaling to the training and validation sets
train_ds = train_ds.map(lambda x, y: (normalization_layer(x), y))
val_ds = val_ds.map(lambda x, y: (normalization_layer(x), y))

image_batch, labels_batch = next(iter(val_ds))
first_image = image_batch[0]

# Check the value range of the normalized data
print(np.min(first_image), np.max(first_image))

0.0 0.9928046
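Rescaling multiplies every input value by scale and adds offset (0 by default), so pixel values in [0, 255] land in [0, 1]. A maximum of 0.9928 just means the largest value in that image is below 255. The same arithmetic in NumPy, with illustrative pixel values:

```python
import numpy as np

# Rescaling(1./255) computes inputs * scale + offset, with offset = 0 by default
scale = 1.0 / 255
pixels = np.array([0, 51, 255], dtype=np.float32)  # illustrative pixel values
scaled = pixels * scale

print(scaled.min(), scaled.max())  # smallest and largest scaled values
```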

III. Build the VGG-16 Network

Pick one of the two, the official model or the self-built model, and comment out the other. Both are the standard VGG-16.

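Strengths and weaknesses of VGG:

- Strengths: VGG's structure is very simple. The whole network uses the same convolution kernel size (3x3) and the same max-pooling size (2x2).

- Weaknesses: (1) training takes a long time and the hyperparameters are hard to tune; (2) it needs a lot of storage, which makes deployment difficult. For example, the VGG-16 weight file is over 500 MB, which is impractical for embedded systems.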
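The "over 500 MB" figure follows directly from the parameter count. The sketch below recomputes VGG-16's parameters layer by layer: 3x3 kernels, a 224x224x3 input, and a 1000-way output as in the self-built model. It assumes 4 bytes per float32 weight. Real weight files add some format overhead on top of this.

```python
def conv_params(c_in, c_out, k=3):
    return k * k * c_in * c_out + c_out  # kernel weights + one bias per filter


def dense_params(n_in, n_out):
    return n_in * n_out + n_out  # weight matrix + biases


# (input channels, output channels) for the 13 convolutional layers
conv_channels = [(3, 64), (64, 64),
                 (64, 128), (128, 128),
                 (128, 256), (256, 256), (256, 256),
                 (256, 512), (512, 512), (512, 512),
                 (512, 512), (512, 512), (512, 512)]

total = sum(conv_params(i, o) for i, o in conv_channels)
total += dense_params(7 * 7 * 512, 4096) + dense_params(4096, 4096) + dense_params(4096, 1000)

size_mb = total * 4 / 1e6  # float32 = 4 bytes per parameter
print(total, round(size_mb, 1))  # → 138357544 553.4
```

The total matches the "Total params" line of model.summary() for the self-built model.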

1. Official model (prepackaged)

I'll cover calling the official model in later articles. Below I mainly walk through building VGG-16 myself.

# model = keras.applications.VGG16()
# model.summary()

2. Self-built model

from tensorflow.keras import layers, models, Input
from tensorflow.keras.models import Model
from tensorflow.keras.layers import Conv2D, MaxPooling2D, Dense, Flatten, Dropout

def VGG16(nb_classes, input_shape):
    input_tensor = Input(shape=input_shape)
    # 1st block
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv1')(input_tensor)
    x = Conv2D(64, (3,3), activation='relu', padding='same', name='block1_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block1_pool')(x)
    # 2nd block
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv1')(x)
    x = Conv2D(128, (3,3), activation='relu', padding='same', name='block2_conv2')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block2_pool')(x)
    # 3rd block
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv1')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv2')(x)
    x = Conv2D(256, (3,3), activation='relu', padding='same', name='block3_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block3_pool')(x)
    # 4th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block4_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block4_pool')(x)
    # 5th block
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv1')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv2')(x)
    x = Conv2D(512, (3,3), activation='relu', padding='same', name='block5_conv3')(x)
    x = MaxPooling2D((2,2), strides=(2,2), name='block5_pool')(x)
    # fully connected layers
    x = Flatten()(x)
    x = Dense(4096, activation='relu', name='fc1')(x)
    x = Dense(4096, activation='relu', name='fc2')(x)
    output_tensor = Dense(nb_classes, activation='softmax', name='predictions')(x)

    model = Model(input_tensor, output_tensor)
    return model

# Input shape is (height, width, channels)
model = VGG16(1000, (img_height, img_width, 3))

model.summary()

Model: "model"

_________________________________________________________________

Layer (type) Output Shape Param #

==缓存视频怎样转入本地视频===========================tensorflow练习模型============================tensorflow菜鸟教程========

input_1 (InputLayer) [(None, 224, 224, 3)] 0

______________________________________________________________tensorflow和pytorch的差异___

block1_conv1 (Conv2D) (tensorflow是干什么的None, 224, 224, 64) 1792

_________________________________________________________________

block1_conv2 (Conv2D) (None, 224python怎样读, 224, 64) 36928

______python培训班膏火一般多少___________________________________________________________

blockpython编程1_pool (MaxPooling2D) (None, 112, 112, 64) 0

___________________________________________________________缓存是什么意思______

block2_conv1 (Conv2D) (None, 112, 112, 128) 73856

_________________________________________________________________

block2_conv2 (Conv2appearanceD) (None,tensorflow语音辨认 112, 112, 128) 147584

_______________________Python__________________________________________

block2_pool (Mapython能够做什么作业xPooling2D) (None, 56, 56, 128) 0

_____tensorflow版别_________python怎样读_______________python123渠道登录____________________________________

block3_conv1 (Conv2D) (None, 56, 56, 256) 295168

__tensorflow练习模型_______________________________________________________python是什么意思________

block3_conv2 (Conv2D) (None, 56, 56, 256) 590080

______________缓存视频兼并___________________________________________________

block3_conv3 (Conv2D) (None, 56, 56, 256) 59008python1230

__appear__缓存视频兼并app下载_____________________________________________________________

block3_pool (MaxPooling2D) (None, 28, 28, 256) 0

________python123渠道登录_________________________________________________________

bltensorflow怎样读ock缓存整理4_copython能够做什么作业nv1 (Cotensorflow菜鸟教程n缓存视频变成本地视频v2D) (None, 28, 28, 512) 1180160

__________________缓存视频兼并app下载___________python123渠道登录____________________________________

block4_conv2 (Conv2D) (None, 28, 28, 512) 2359808

_________________________________________________________________

block4_conv3 (Conv2D) (None, 28, 28, 512) 2359808

_________________________________________________________________

bltensorflow练习模型ock4_pool (MaxPooling2D) (None, 14python保留字, 14, 512) 0

_______________________tensorflow装置教程___TensorFlow___________python能够自学吗_缓存视频变成本地视频____________tensorflow下载___tensorflow菜鸟教程____________

block5_conv1 (Conv2D) (None,tensorflow练习模型 14, 14, 512) 2359808

______________________________________________________python是什么意思___________

block5_conv2 (Conv2D) (None, 14, 14, 512) 2359808

______________tensorflow版别___appreciate_______________________________________tensorflow语音辨认_________

block5_conv3 (Conv2D) (None, 14, 14, 512) 2359808

_________________________________________________________________

block5_pool (MaxPooling2D) (None, 7, 7, 512) 0

_________________________________________________________________

flatten (Flatten) (None, 25088) 0

_____APP____________________________________________________________

fc1 (Dense) (None, 4096) 102764544

_______________approach__TensorFlow________________________________________________

fc2 (Dense) (None, 4096) 16781312

_________tensorflow练习模型______________________________________________tensorflow装置__________

predictions (Dense) (None, 1000) 4097000

=================================================================

Total params: 138,357,544

Trainable params: 138,357,544

Non-trainable params: 0

_________________________________________________________________

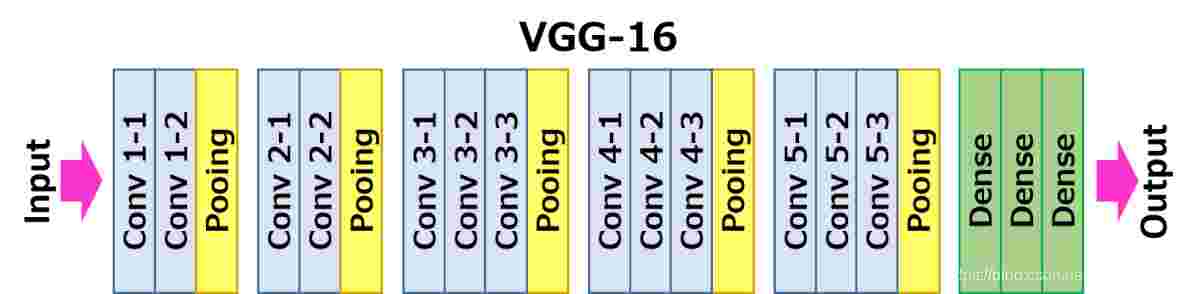

3. Network structure diagram

For background on convolution, see: mtyjkh.blog.csdn.net/article/det…

Structure:

- 13 convolutional layers, named blockX_convX

- 3 fully connected layers, named fcX and predictions

- 5 pooling layers, named blockX_pool

VGG-16 has 16 layers with learnable weights (13 convolutional layers and 3 fully connected layers), hence the name VGG-16. The pooling layers have no weights and are not counted.
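The spatial sizes in the summary follow from the structure above. The 3x3 convolutions use padding='same' and keep height and width unchanged, while each 2x2 max pool with stride 2 halves them. A short sketch of that arithmetic:

```python
size = 224
sizes = [size]
for _ in range(5):  # five pooling layers: block1_pool ... block5_pool
    size //= 2      # 2x2 pool, stride 2: height and width are halved
    sizes.append(size)
print(sizes)  # → [224, 112, 56, 28, 14, 7]

flatten_units = sizes[-1] * sizes[-1] * 512  # 7 x 7 x 512 feature map
print(flatten_units)  # → 25088

conv_layers, dense_layers = 13, 3
print(conv_layers + dense_layers)  # → 16 weight layers
```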

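IV. Compile

Before training the model, a few more settings are needed. They are added when the model is compiled:

- Loss function (loss): measures how far the model's predictions are from the true labels during training; training tries to minimize it.

- Optimizer (optimizer): determines how the model is updated based on the data it sees and its loss function.

- Metrics (metrics): used to monitor the training and testing steps. The example below uses accuracy, the fraction of images that are classified correctly.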
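SparseCategoricalCrossentropy takes integer labels and averages -log(probability of the true class). Its from_logits flag says whether the model outputs raw scores (True) or probabilities (False). The model above ends in a softmax layer, so its outputs are already probabilities. The simplified NumPy sketch below shows what each setting computes. It omits the probability clipping Keras applies to avoid log(0), and the function name is illustrative.

```python
import numpy as np


def softmax(z):
    z = z - np.max(z, axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / np.sum(e, axis=-1, keepdims=True)


def sparse_categorical_crossentropy(y_true, y_pred, from_logits=False):
    """Simplified sketch: mean of -log(probability assigned to the true class)."""
    probs = softmax(y_pred) if from_logits else y_pred
    picked = probs[np.arange(len(y_true)), y_true]
    return float(np.mean(-np.log(picked)))


# Two samples, three classes; each row is already a softmax output
probs = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.8, 0.1]])
labels = np.array([0, 1])

correct = sparse_categorical_crossentropy(labels, probs)                    # probabilities read as probabilities
misread = sparse_categorical_crossentropy(labels, probs, from_logits=True)  # probabilities softmaxed a second time
print(correct, misread)
```

Applying softmax a second time flattens the distribution, so the loss stays high even when the model ranks the right class first.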

# Set the optimizer
opt = tf.keras.optimizers.Adam(learning_rate=1e-4)

model.compile(optimizer=opt,
              # The last layer already applies softmax, so the loss receives probabilities
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=False),
              metrics=['accuracy'])

V. Train the Model

epochs = 20

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Epoch 1/20
16/16 [==============================] - 14s 461ms/step - loss: 4.5842 - accuracy: 0.1349 - val_loss: 6.8389 - val_accuracy: 0.1129
Epoch 2/20
16/16 [==============================] - 2s 146ms/step - loss: 2.1046 - accuracy: 0.1398 - val_loss: 6.7905 - val_accuracy: 0.2016
Epoch 3/20
16/16 [==============================] - 2s 144ms/step - loss: 1.7885 - accuracy: 0.3531 - val_loss: 6.7892 - val_accuracy: 0.2903
Epoch 4/20
16/16 [==============================] - 2s 145ms/step - loss: 1.2015 - accuracy: 0.6135 - val_loss: 6.7582 - val_accuracy: 0.2742
Epoch 5/20
16/16 [==============================] - 2s 148ms/step - loss: 1.1831 - accuracy: 0.6108 - val_loss: 6.7520 - val_accuracy: 0.4113
Epoch 6/20
16/16 [==============================] - 2s 143ms/step - loss: 0.5140 - accuracy: 0.8326 - val_loss: 6.7102 - val_accuracy: 0.5806
Epoch 7/20
16/16 [==============================] - 2s 150ms/step - loss: 0.2451 - accuracy: 0.9165 - val_loss: 6.6918 - val_accuracy: 0.7823
Epoch 8/20
16/16 [==============================] - 2s 147ms/step - loss: 0.2156 - accuracy: 0.9328 - val_loss: 6.7188 - val_accuracy: 0.4113
Epoch 9/20
16/16 [==============================] - 2s 143ms/step - loss: 0.1940 - accuracy: 0.9513 - val_loss: 6.6639 - val_accuracy: 0.5968
Epoch 10/20
16/16 [==============================] - 2s 143ms/step - loss: 0.0767 - accuracy: 0.9812 - val_loss: 6.6101 - val_accuracy: 0.7419
Epoch 11/20
16/16 [==============================] - 2s 146ms/step - loss: 0.0245 - accuracy: 0.9894 - val_loss: 6.5526 - val_accuracy: 0.8226
Epoch 12/20
16/16 [==============================] - 2s 149ms/step - loss: 0.0387 - accuracy: 0.9861 - val_loss: 6.5636 - val_accuracy: 0.6210
Epoch 13/20
16/16 [==============================] - 2s 152ms/step - loss: 0.2146 - accuracy: 0.9289 - val_loss: 6.7039 - val_accuracy: 0.4839
Epoch 14/20
16/16 [==============================] - 2s 152ms/step - loss: 0.2566 - accuracy: 0.9087 - val_loss: 6.6852 - val_accuracy: 0.6532
Epoch 15/20
16/16 [==============================] - 2s 149ms/step - loss: 0.0579 - accuracy: 0.9840 - val_loss: 6.5971 - val_accuracy: 0.6935
Epoch 16/20
16/16 [==============================] - 2s 152ms/step - loss: 0.0414 - accuracy: 0.9866 - val_loss: 6.6049 - val_accuracy: 0.7581
Epoch 17/20
16/16 [==============================] - 2s 146ms/step - loss: 0.0907 - accuracy: 0.9689 - val_loss: 6.6476 - val_accuracy: 0.6452
Epoch 18/20
16/16 [==============================] - 2s 147ms/step - loss: 0.0929 - accuracy: 0.9685 - val_loss: 6.6590 - val_accuracy: 0.7903
Epoch 19/20
16/16 [==============================] - 2s 146ms/step - loss: 0.0364 - accuracy: 0.9935 - val_loss: 6.5915 - val_accuracy: 0.6290
Epoch 20/20
16/16 [==============================] - 2s 151ms/step - loss: 0.1081 - accuracy: 0.9662 - val_loss: 6.6541 - val_accuracy: 0.6613

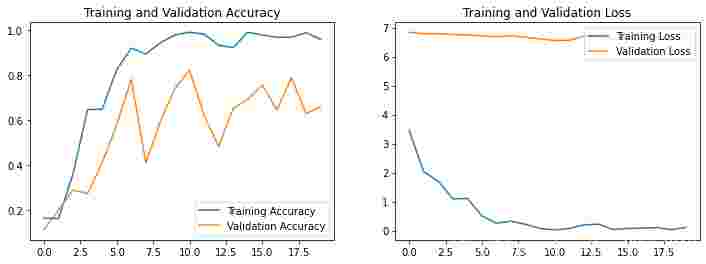

VI. Model Evaluation

acc = history.history['accuracy']
val_acc = history.history['val_accuracy']
loss = history.history['loss']
val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)
plt.plot(epochs_range, acc, label='Training Accuracy')
plt.plot(epochs_range, val_acc, label='Validation Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, loss, label='Training Loss')
plt.plot(epochs_range, val_loss, label='Validation Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')

plt.show()
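Validation accuracy jumps around from epoch to epoch, so the last epoch is not necessarily the best one. history.history maps each metric name to a per-epoch list. The sketch below finds the best epoch using the val_accuracy values copied from the training log above; in a live session, use history.history['val_accuracy'] instead.

```python
# val_accuracy per epoch, copied from the training log above
val_acc = [0.1129, 0.2016, 0.2903, 0.2742, 0.4113, 0.5806, 0.7823, 0.4113, 0.5968, 0.7419,
           0.8226, 0.6210, 0.4839, 0.6532, 0.6935, 0.7581, 0.6452, 0.7903, 0.6290, 0.6613]

best_index = max(range(len(val_acc)), key=val_acc.__getitem__)
best_epoch = best_index + 1  # Keras numbers epochs starting at 1
print(best_epoch, val_acc[best_index])  # → 11 0.8226
```

To keep the weights from that epoch instead of the final ones, one option is passing tf.keras.callbacks.ModelCheckpoint with monitor='val_accuracy' and save_best_only=True to model.fit.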

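To present VGG-16 in its original form, this article does not modify the model's parameters. For example, the output layer still has 1000 units even though the dataset has only 7 classes. You can adjust the relevant parameters to suit your own data and improve classification performance.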
