Cross-Process Calls

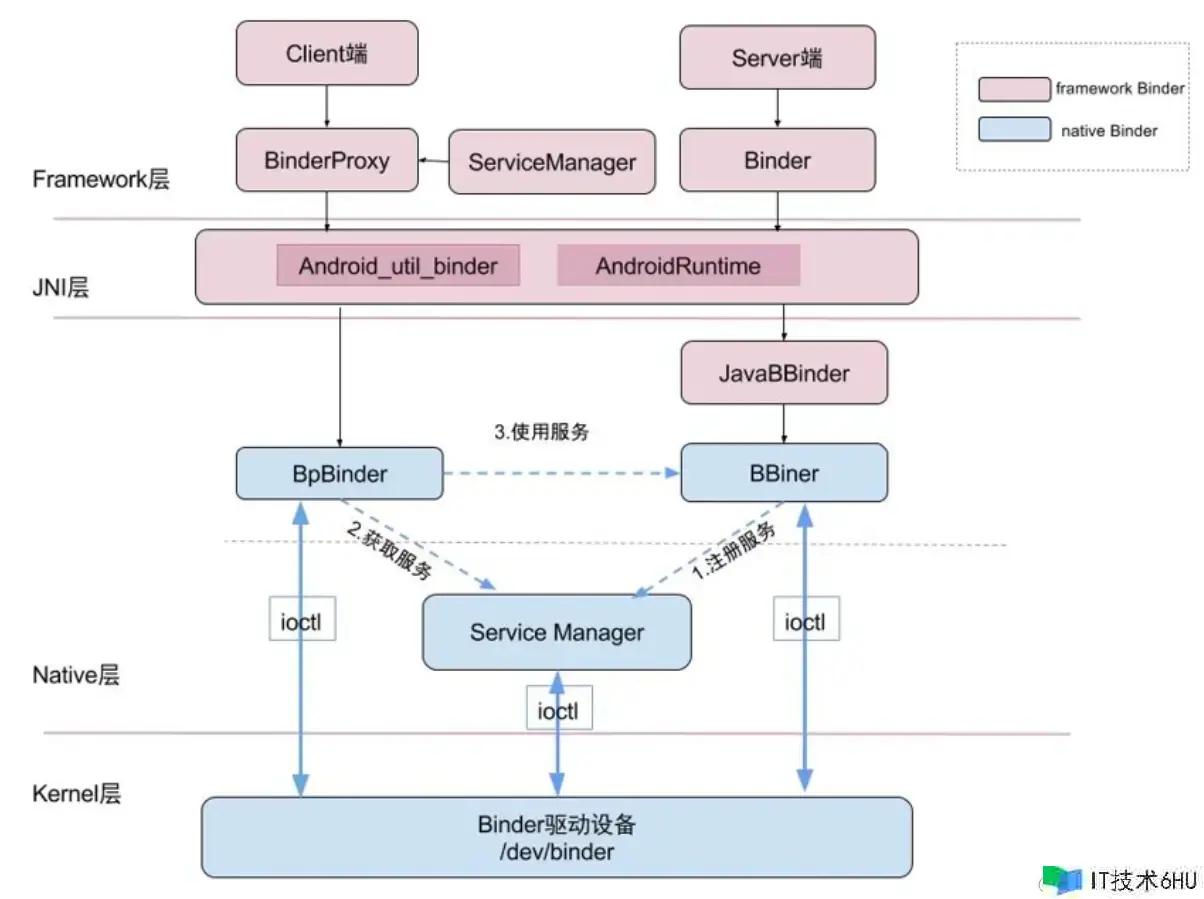

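Internally, ServiceManager stores only a reference (a token) for each Binder, not the object itself. Why are there two kinds, BpBinder and BBinder? BpBinder is used by the client and BBinder by the server. Both wrap a Binder so that the Binder driver is easier to use.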
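A toy model can make the split concrete. The C++ sketch below is not AOSP code: `LocalBinder`, `ProxyBinder`, `FakeDriver` and `EchoService` are hypothetical names. The server-side object (playing the role of BBinder) implements `onTransact`. The client-side proxy (playing BpBinder) holds nothing but an integer handle and forwards every call to a stand-in for the driver, which finds the target object by that handle.

```cpp
#include <cassert>
#include <cstdint>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical stand-in for BBinder: lives on the server side and does the real work.
class LocalBinder {
public:
    virtual ~LocalBinder() = default;
    virtual std::string onTransact(uint32_t code, const std::string& data) = 0;
};

// Hypothetical stand-in for the Binder driver: maps handles to server objects.
class FakeDriver {
public:
    int32_t registerObject(std::shared_ptr<LocalBinder> obj) {
        int32_t handle = nextHandle_++;
        objects_[handle] = std::move(obj);
        return handle;
    }
    std::string transact(int32_t handle, uint32_t code, const std::string& data) {
        auto it = objects_.find(handle);
        if (it == objects_.end()) return "DEAD_OBJECT";  // no object behind this handle
        return it->second->onTransact(code, data);
    }

private:
    int32_t nextHandle_ = 1;  // handle 0 is reserved (ServiceManager in real Binder)
    std::map<int32_t, std::shared_ptr<LocalBinder>> objects_;
};

// Hypothetical stand-in for BpBinder: the client only knows a handle.
class ProxyBinder {
public:
    ProxyBinder(FakeDriver& driver, int32_t handle) : driver_(driver), handle_(handle) {}
    std::string transact(uint32_t code, const std::string& data) {
        return driver_.transact(handle_, code, data);
    }

private:
    FakeDriver& driver_;
    int32_t handle_;
};

// Example server object that understands a single transaction code.
class EchoService : public LocalBinder {
public:
    std::string onTransact(uint32_t code, const std::string& data) override {
        return code == 1 ? "echo:" + data : "UNKNOWN_TRANSACTION";
    }
};
```

Because the client side keeps only a handle, the proxy never touches the server object's memory. Resolving the handle is entirely the driver's job.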

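When the client calls a method, how does that call actually reach the service side? Let's trace the flow through the source, starting with a client call to the service's addPerson method: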

```java
private ILeoAidl myService;

myService.addPerson(new Person("leo", 3));
```

The call then lands in the AIDL-generated proxy:

```java
@Override
public void addPerson(com.enjoy.leoservice.Person person) throws android.os.RemoteException {
    // Serialization is mandatory for cross-process communication.
    android.os.Parcel _data = android.os.Parcel.obtain();
    android.os.Parcel _reply = android.os.Parcel.obtain();
    try {
        _data.writeInterfaceToken(DESCRIPTOR);
        if ((person != null)) {
            _data.writeInt(1);                 // marker: a Person follows
            person.writeToParcel(_data, 0);
        } else {
            _data.writeInt(0);                 // marker: null
        }
        // Hand the marshalled request to the remote binder and wait for the reply.
        boolean _status = mRemote.transact(Stub.TRANSACTION_addPerson, _data, _reply, 0);
        if (!_status && getDefaultImpl() != null) {
            getDefaultImpl().addPerson(person);
            return;
        }
        _reply.readException();
    } finally {
        _reply.recycle();
        _data.recycle();
    }
}
```
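The generated code writes an interface token, then a 1/0 flag, and only then the object. The hedged C++ sketch below shows why that wire layout works. `MiniParcel` is a hypothetical and heavily simplified stand-in for Parcel: it has no alignment padding, no UTF-16 strings and no binder objects. The Person class is not shown, so the field order (name first, then age) is our own assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <optional>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical, greatly simplified Parcel: a byte buffer plus a read cursor.
class MiniParcel {
public:
    void writeInt32(int32_t v) {
        const auto* p = reinterpret_cast<const uint8_t*>(&v);
        buf_.insert(buf_.end(), p, p + sizeof(v));
    }
    void writeString(const std::string& s) {
        writeInt32(static_cast<int32_t>(s.size()));  // length prefix
        buf_.insert(buf_.end(), s.begin(), s.end());
    }
    int32_t readInt32() {
        if (pos_ + sizeof(int32_t) > buf_.size()) throw std::out_of_range("readInt32");
        int32_t v;
        std::memcpy(&v, buf_.data() + pos_, sizeof(v));
        pos_ += sizeof(v);
        return v;
    }
    std::string readString() {
        int32_t len = readInt32();
        if (len < 0 || pos_ + static_cast<std::size_t>(len) > buf_.size())
            throw std::out_of_range("readString");
        std::string s(reinterpret_cast<const char*>(buf_.data() + pos_), static_cast<std::size_t>(len));
        pos_ += static_cast<std::size_t>(len);
        return s;
    }

private:
    std::vector<uint8_t> buf_;
    std::size_t pos_ = 0;
};

struct Person {
    std::string name;
    int32_t age;
};

// Mirrors the proxy side: interface token, then the 1/0 presence flag, then the fields.
void writeAddPersonRequest(MiniParcel& data, const std::string& descriptor,
                           const std::optional<Person>& person) {
    data.writeString(descriptor);
    if (person) {
        data.writeInt32(1);
        data.writeString(person->name);  // assumed field order
        data.writeInt32(person->age);
    } else {
        data.writeInt32(0);
    }
}

// Mirrors what a server-side Stub would do before calling addPerson():
// fields must be read back in exactly the order they were written.
std::optional<Person> readAddPersonRequest(MiniParcel& data, const std::string& expectedDescriptor) {
    if (data.readString() != expectedDescriptor) throw std::runtime_error("interface token mismatch");
    if (data.readInt32() == 0) return std::nullopt;
    Person p;
    p.name = data.readString();
    p.age = data.readInt32();
    return p;
}
```

The flag lets the receiver tell "null" apart from "an object follows" without any out-of-band metadata. The interface token lets it reject a request that was meant for a different interface.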

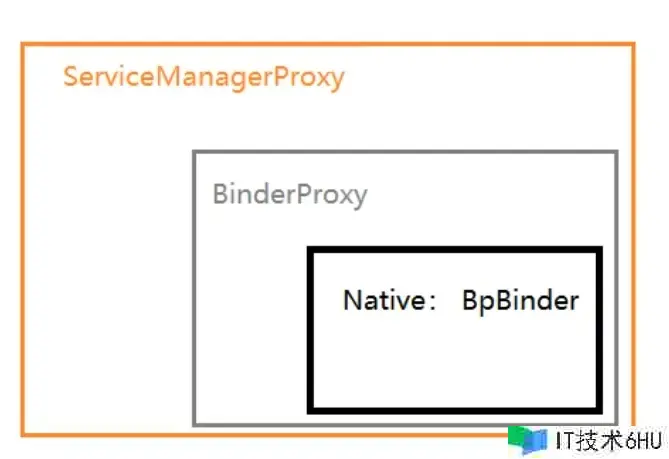

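Here mRemote is an implementation of IBinder. So which implementation is it? The call is made in the client process, so this Binder object is actually a BinderProxy. As analyzed in the previous article, all the client can ever hold is a proxy for the server.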

```java
public boolean transact(int code, Parcel data, Parcel reply, int flags) throws RemoteException {
    // ......
    try {
        // This is where the call crosses into JNI.
        return transactNative(code, data, reply, flags);
    } finally {
        if (transactListener != null) {
            transactListener.onTransactEnded(session);
        }
        if (tracingEnabled) {
            Trace.traceEnd(Trace.TRACE_TAG_ALWAYS);
        }
    }
}
```

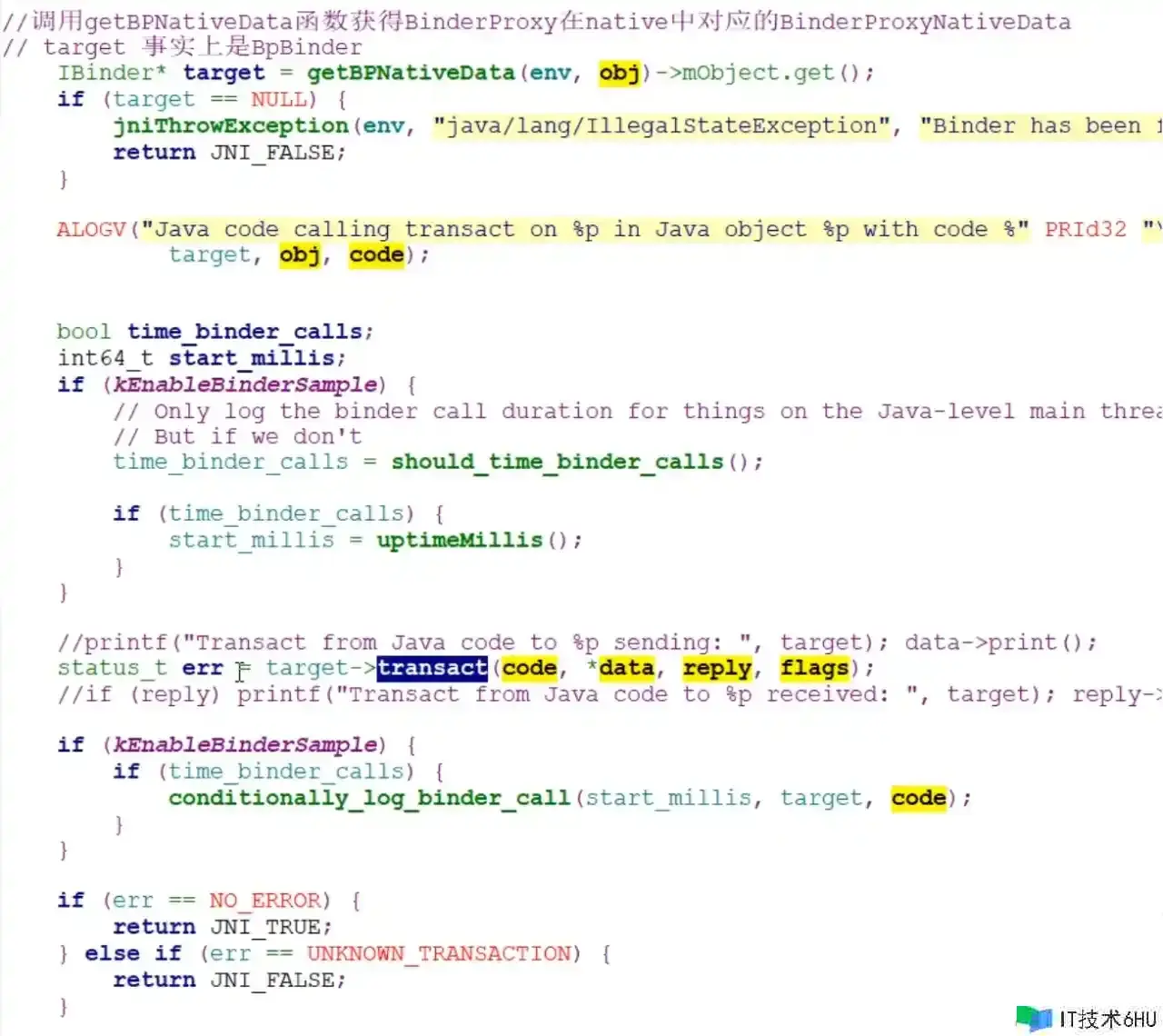

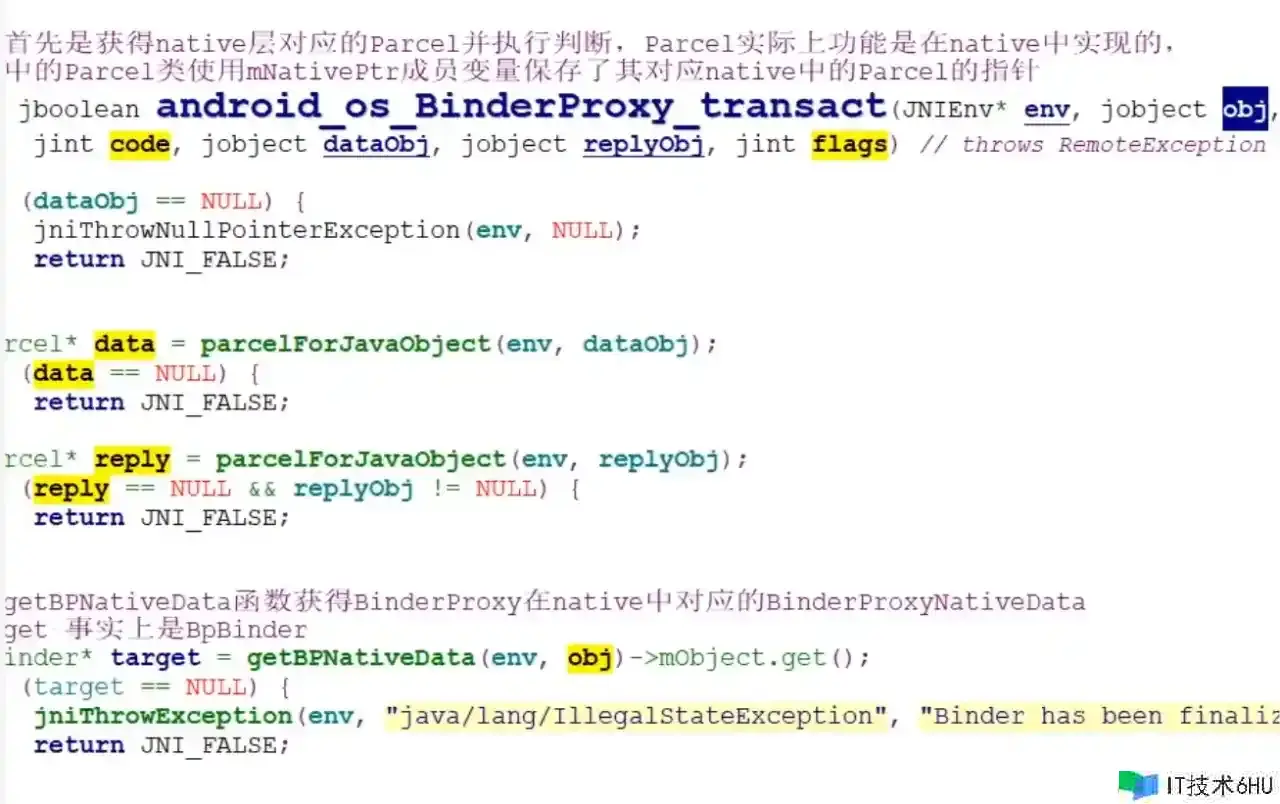

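From here the work continues in native code. obj is the Java BinderProxy. This step also converts the data format, turning the Java Parcel objects into their native counterparts, which is why Binder relies on Parcel for serialization. target is the C++ BpBinder.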

The implementation of getBPNativeData:

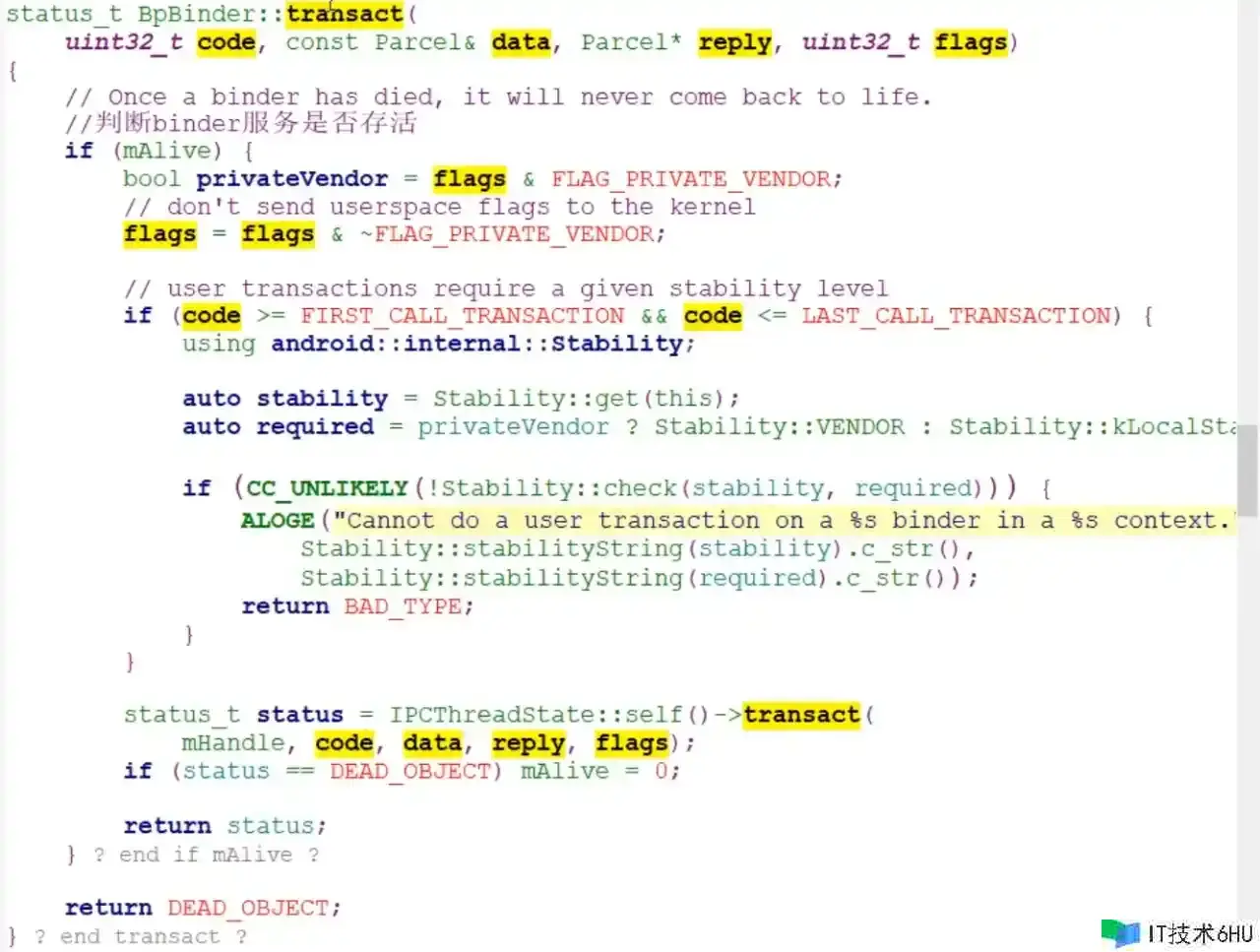
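The `(jlong)` casts in this JNI code rest on a simple idea: a native pointer is stored in a 64-bit Java `long` field and cast back when it is needed. The sketch below shows only that round trip. `NativeProxyData`, `JavaSideProxy`, `wrap` and `unwrap` are hypothetical names. They stand in for BinderProxyNativeData, the Java BinderProxy object, the value passed to getInstance(), and getBPNativeData() respectively.

```cpp
#include <cassert>
#include <cstdint>
#include <memory>

// Hypothetical stand-in for BinderProxyNativeData (the real one holds the sp<IBinder>).
struct NativeProxyData {
    int32_t handle;
};

// Hypothetical stand-in for the Java BinderProxy: it keeps the native pointer in a long field.
struct JavaSideProxy {
    int64_t nativeData;  // a Java long (jlong) is 64-bit, wide enough for any pointer
};

// Like passing (jlong) nativeData to BinderProxy.getInstance().
JavaSideProxy wrap(NativeProxyData* data) {
    return JavaSideProxy{static_cast<int64_t>(reinterpret_cast<intptr_t>(data))};
}

// Like getBPNativeData(): turn the stored long back into the native pointer.
NativeProxyData* unwrap(const JavaSideProxy& obj) {
    return reinterpret_cast<NativeProxyData*>(static_cast<intptr_t>(obj.nativeData));
}
```

The Java side never dereferences the number. It simply carries it until native code needs the object again.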

Following transact further down, execution eventually reaches this point:

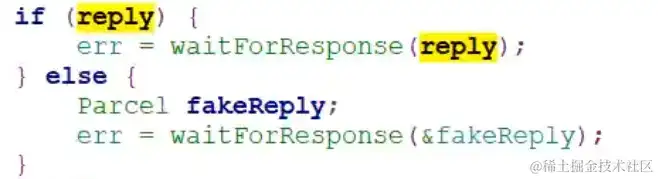

waitForResponse is a member function of IPCThreadState. It runs an infinite loop that keeps reading from the driver until a command ends the wait:

```cpp
status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)
{
    uint32_t cmd;
    int32_t err;

    while (1) {
        // Talk to the driver: send pending commands and read back what it returns
        if ((err=talkWithDriver()) < NO_ERROR) break;
        err = mIn.errorCheck();
        if (err < NO_ERROR) break;
        if (mIn.dataAvail() == 0) continue;

        cmd = (uint32_t)mIn.readInt32();

        IF_LOG_COMMANDS() {
            alog << "Processing waitForResponse Command: "
                << getReturnString(cmd) << endl;
        }

        switch (cmd) {
        case BR_TRANSACTION_COMPLETE:
            if (!reply && !acquireResult) goto finish;
            break;

        case BR_DEAD_REPLY:
            err = DEAD_OBJECT;
            goto finish;

        case BR_FAILED_REPLY:
            err = FAILED_TRANSACTION;
            goto finish;

        case BR_ACQUIRE_RESULT:
            {
                ALOG_ASSERT(acquireResult != nullptr, "Unexpected brACQUIRE_RESULT");
                const int32_t result = mIn.readInt32();
                if (!acquireResult) continue;
                *acquireResult = result ? NO_ERROR : INVALID_OPERATION;
            }
            goto finish;

        case BR_REPLY:
            {
                // The reply is described by a binder_transaction_data
                binder_transaction_data tr;
                err = mIn.read(&tr, sizeof(tr));
                ALOG_ASSERT(err == NO_ERROR, "Not enough command data for brREPLY");
                if (err != NO_ERROR) goto finish;

                if (reply) {
                    if ((tr.flags & TF_STATUS_CODE) == 0) {
                        reply->ipcSetDataReference(
                            reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),
                            tr.data_size,
                            reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),
                            tr.offsets_size/sizeof(binder_size_t),
                            freeBuffer, this);
                    } else {
                        err = *reinterpret_cast<const status_t*>(tr.data.ptr.buffer);
                        freeBuffer(nullptr,
                            reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),
                            tr.data_size,
                            reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),
                            tr.offsets_size/sizeof(binder_size_t), this);
                    }
                } else {
                    freeBuffer(nullptr,
                        reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),
                        tr.data_size,
                        reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),
                        tr.offsets_size/sizeof(binder_size_t), this);
                    continue;
                }
            }
            goto finish;

        default:
            // Any other BR_* command is handled by executeCommand()
            err = executeCommand(cmd);
            if (err != NO_ERROR) goto finish;
            break;
        }
    }

    // ... (the finish: label and the error handling after it are omitted here)
}
```
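The control flow of this loop can be reduced to a small, runnable model. The sketch below uses hypothetical names throughout. A `std::deque` replaces the commands that talkWithDriver() would read from the driver. The enum values are placeholders, not the real ioctl-encoded BR_* constants. BR_ACQUIRE_RESULT and buffer freeing are left out.

```cpp
#include <cassert>
#include <deque>
#include <string>

// Placeholder command codes standing in for BR_TRANSACTION_COMPLETE, BR_REPLY, etc.
enum class Cmd { TransactionComplete, Reply, DeadReply, FailedReply, Noop };
enum class Status { Ok, DeadObject, FailedTransaction, NoMoreData };

struct Incoming {
    Cmd cmd;
    std::string payload;
};

// Simplified waitForResponse: keep consuming commands until one of them ends the wait.
Status waitForResponse(std::deque<Incoming>& queue, std::string* reply, int& otherCommands) {
    while (!queue.empty()) {
        Incoming in = queue.front();
        queue.pop_front();
        switch (in.cmd) {
            case Cmd::TransactionComplete:
                if (!reply) return Status::Ok;  // one-way call: nothing more to wait for
                break;                          // synchronous call: keep waiting for the reply
            case Cmd::DeadReply:
                return Status::DeadObject;
            case Cmd::FailedReply:
                return Status::FailedTransaction;
            case Cmd::Reply:
                if (!reply) break;              // real code frees the buffer and keeps looping
                *reply = in.payload;
                return Status::Ok;
            default:
                ++otherCommands;                // real code passes these to executeCommand()
                break;
        }
    }
    return Status::NoMoreData;  // the real loop would block in the driver instead
}
```

The model mirrors one key point. For a synchronous call, BR_TRANSACTION_COMPLETE only confirms that the driver accepted the request, so the loop keeps going until BR_REPLY or an error arrives.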

The ioctl call lives in talkWithDriver. That single call is used both to write to the driver and to read from it:

```cpp
status_t IPCThreadState::talkWithDriver(bool doReceive)
{
    if (mProcess->mDriverFD <= 0) {
        return -EBADF;
    }

    binder_write_read bwr;

    // Is the read buffer empty?
    const bool needRead = mIn.dataPosition() >= mIn.dataSize();

    // We don't want to write anything if we are still reading
    // from data left in the input buffer and the caller
    // has requested to read the next data.
    const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

    bwr.write_size = outAvail;
    bwr.write_buffer = (uintptr_t)mOut.data();

    // This is what we'll read.
    if (doReceive && needRead) {
        bwr.read_size = mIn.dataCapacity();
        bwr.read_buffer = (uintptr_t)mIn.data();
    } else {
        bwr.read_size = 0;
        bwr.read_buffer = 0;
    }

    IF_LOG_COMMANDS() {
        if (outAvail != 0) {
            alog << "Sending commands to driver: " << indent;
            const void* cmds = (const void*)bwr.write_buffer;
            const void* end = ((const uint8_t*)cmds)+bwr.write_size;
            alog << HexDump(cmds, bwr.write_size) << endl;
            while (cmds < end) cmds = printCommand(alog, cmds);
            alog << dedent;
        }
        alog << "Size of receive buffer: " << bwr.read_size
            << ", needRead: " << needRead << ", doReceive: " << doReceive << endl;
    }

    // ... (rest omitted: this is where ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) is issued)
}
```
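The size calculation at the top of talkWithDriver() is easy to isolate. The sketch below reproduces only the needRead/outAvail decision, taking the relevant Parcel positions as plain numbers. The function and struct names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical mirror of the two size fields talkWithDriver() puts into binder_write_read.
struct WriteReadPlan {
    std::size_t writeSize;
    std::size_t readSize;
};

// inPos/inSize: read cursor and data size of mIn; outSize: bytes queued in mOut;
// inCapacity: capacity of mIn.
WriteReadPlan planTalkWithDriver(bool doReceive, std::size_t inPos, std::size_t inSize,
                                 std::size_t outSize, std::size_t inCapacity) {
    const bool needRead = inPos >= inSize;  // has everything in mIn been consumed?
    // Don't write while unread input remains and the caller wants to read next.
    const std::size_t outAvail = (!doReceive || needRead) ? outSize : 0;
    // Only ask the driver for more data if the input buffer is exhausted.
    const std::size_t readSize = (doReceive && needRead) ? inCapacity : 0;
    return WriteReadPlan{outAvail, readSize};
}
```

binder_write_read carries both a write part and a read part. As a result, one BINDER_WRITE_READ ioctl can send the queued commands and then wait for incoming ones.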

To summarize, this is the client-side source flow of a Binder call:

The app calls service.addPerson() -> BinderProxy.transact() -> android_util_Binder.cpp: android_os_BinderProxy_transact() -> BpBinder::transact() -> IPCThreadState::transact() -> IPCThreadState::waitForResponse() -> IPCThreadState::talkWithDriver() -> ioctl

That still leaves a few open questions:

- When does BinderProxy come into being?

- What is android_util_Binder?

- What is BpBinder?

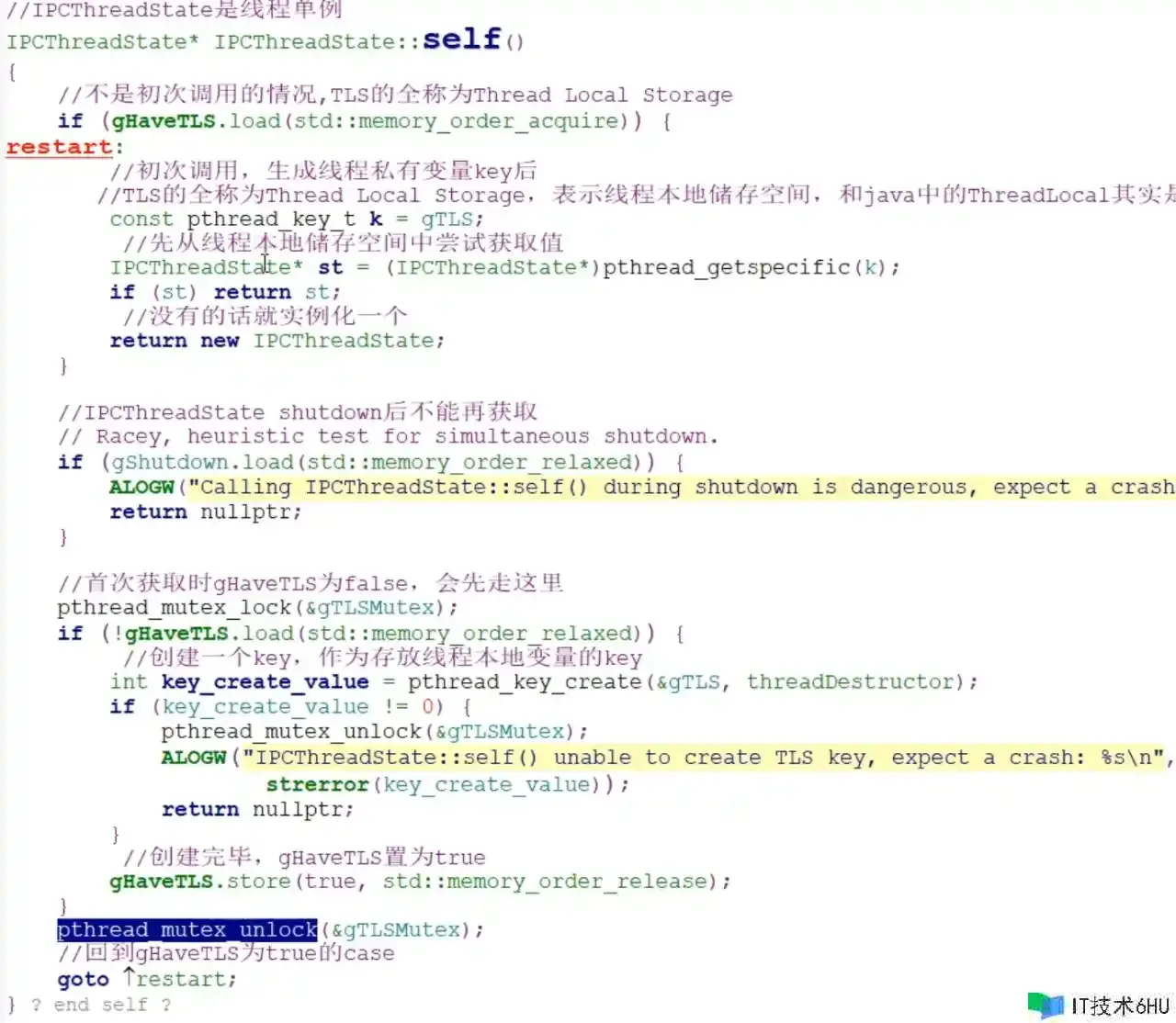

- What is IPCThreadState?

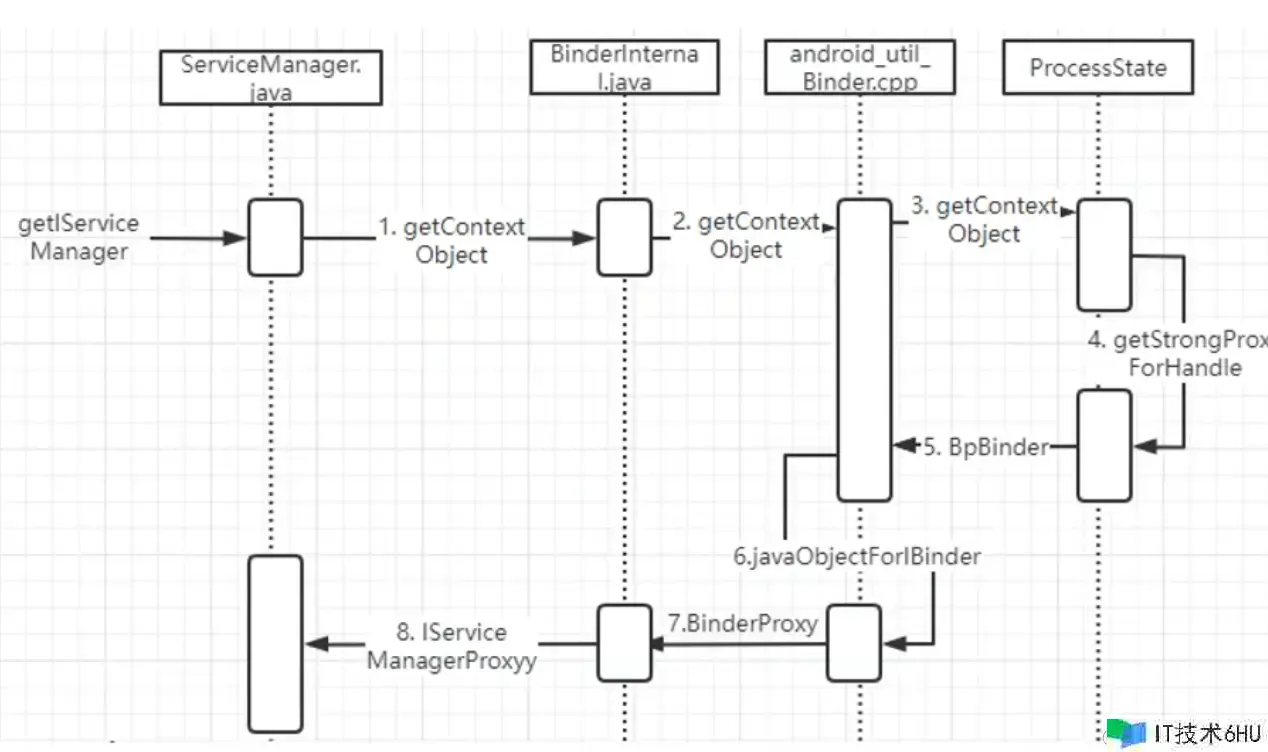

Let's work out what these classes are by following how the client side obtains ServiceManager.

To obtain a service through ServiceManager, we usually call getService, which is implemented as follows:

```java
public static IBinder getService(String name) {
    try {
        IBinder service = sCache.get(name);
        if (service != null) {
            return service;
        } else {
            return Binder.allowBlocking(rawGetService(name));
        }
    } catch (RemoteException e) {
        Log.e(TAG, "error in getService", e);
    }
    return null;
}

private static IBinder rawGetService(String name) throws RemoteException {
    // Obtain the Binder object.
    final IBinder binder = getIServiceManager().getService(name);
    // ... (rest of the method omitted)
}

private static IServiceManager getIServiceManager() {
    if (sServiceManager != null) {
        return sServiceManager;
    }

    // Find the service manager. What we get back is actually a Binder proxy for ServiceManager.
    sServiceManager = ServiceManagerNative
            .asInterface(Binder.allowBlocking(BinderInternal.getContextObject()));
    return sServiceManager;
}
```
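The methods above combine a lookup cache with a ServiceManager proxy that is created lazily and then reused. Below is a hedged C++ model of that shape. `ServiceLookup`, `Fetch` and `BinderRef` are hypothetical names, and an `int` stands in for an IBinder. The Java code shown returns the result of a successful remote lookup without writing it back into sCache, and the model does the same.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <string>
#include <utility>

// Hypothetical: an int stands in for an IBinder reference; -1 means "not found".
using BinderRef = int;

class ServiceLookup {
public:
    // Asks the ServiceManager for a service by name (stand-in for IServiceManager.getService).
    using Fetch = std::function<BinderRef(const std::string&)>;

    explicit ServiceLookup(std::function<Fetch()> makeServiceManager)
        : makeServiceManager_(std::move(makeServiceManager)) {}

    // Like ServiceManager.getService(): cache first, then the remote lookup.
    BinderRef getService(const std::string& name) {
        auto it = cache_.find(name);
        if (it != cache_.end()) return it->second;  // like sCache.get(name)
        return serviceManager()(name);              // like rawGetService(name); not cached
    }

    // getService() never fills the cache itself, so tests fill it explicitly.
    void prefill(const std::string& name, BinderRef binder) { cache_[name] = binder; }
    int creations() const { return creations_; }

private:
    // Like getIServiceManager(): create the proxy on first use, then reuse it.
    Fetch& serviceManager() {
        if (!sm_) {
            sm_ = makeServiceManager_();
            ++creations_;
        }
        return sm_;
    }

    std::function<Fetch()> makeServiceManager_;
    Fetch sm_;
    std::map<std::string, BinderRef> cache_;
    int creations_ = 0;
};
```

A cache hit never touches ServiceManager at all. Only the first miss pays the cost of building the proxy.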

In ServiceManagerNative:

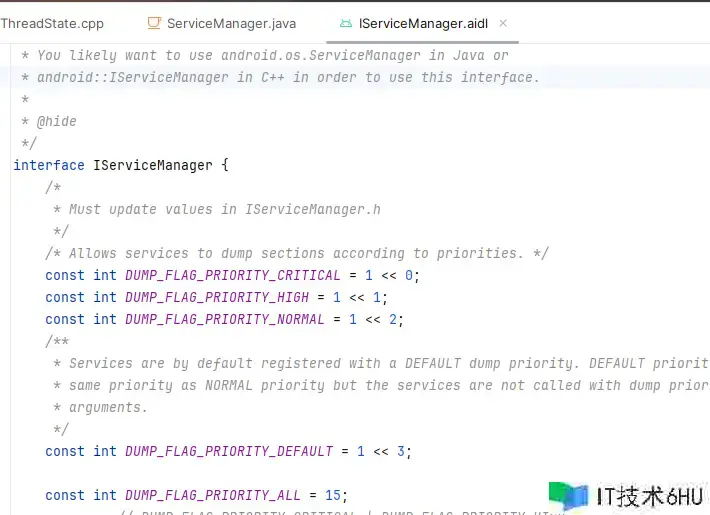

```java
public static IServiceManager asInterface(IBinder obj) {
    if (obj == null) {
        return null;
    }

    // ServiceManager is never local
    return new ServiceManagerProxy(obj);
}

// What asInterface returns is a ServiceManager proxy:
public ServiceManagerProxy(IBinder remote) {
    mRemote = remote;
    mServiceManager = IServiceManager.Stub.asInterface(remote);
}

// BinderInternal.getContextObject()
public static final native IBinder getContextObject();
```

Notice that this asInterface conversion follows the same pattern as the AIDL code we write in our own apps.
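For comparison, consider the asInterface() that AIDL generates for an app interface. It first calls queryLocalInterface(DESCRIPTOR). If the binder lives in the same process, it returns the implementation directly; otherwise it wraps the binder in a Stub.Proxy. ServiceManagerNative skips that check because ServiceManager is never local. The C++ sketch below models the decision with hypothetical types (`IPersonService`, `IBinderLike`, `LocalService`, `RemoteBinder`, `PersonProxy`).

```cpp
#include <cassert>
#include <memory>
#include <string>

// Hypothetical service interface (plays the role of the AIDL interface).
struct IPersonService {
    virtual ~IPersonService() = default;
    virtual std::string describe() const = 0;
};

// Hypothetical binder interface: returns the local implementation if the
// binder lives in this process, otherwise nullptr.
struct IBinderLike {
    virtual ~IBinderLike() = default;
    virtual IPersonService* queryLocalInterface(const std::string& descriptor) = 0;
};

// Same-process object: both the binder and the implementation (like a Stub subclass).
struct LocalService : IBinderLike, IPersonService {
    IPersonService* queryLocalInterface(const std::string& d) override {
        return d == "IPersonService" ? this : nullptr;
    }
    std::string describe() const override { return "local"; }
};

// Binder for a remote object (like BinderProxy): never has a local interface.
struct RemoteBinder : IBinderLike {
    IPersonService* queryLocalInterface(const std::string&) override { return nullptr; }
};

// Client-side proxy (like Stub.Proxy): would marshal calls through remote_.
struct PersonProxy : IPersonService {
    explicit PersonProxy(std::shared_ptr<IBinderLike> remote) : remote_(std::move(remote)) {}
    std::string describe() const override { return "proxy"; }
    std::shared_ptr<IBinderLike> remote_;
};

// Local binder: use the object directly; remote binder: wrap it in a proxy.
std::shared_ptr<IPersonService> asInterface(const std::shared_ptr<IBinderLike>& obj) {
    if (!obj) return nullptr;
    if (IPersonService* local = obj->queryLocalInterface("IPersonService")) {
        return std::shared_ptr<IPersonService>(obj, local);  // aliasing ctor keeps obj alive
    }
    return std::make_shared<PersonProxy>(obj);
}
```

The local short-circuit is why a service bound inside its own process incurs no marshalling at all.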

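ServiceManager is the manager of all services, so every AIDL-based cross-process call ultimately depends on it. That means we cannot obtain it through a ServiceConnection. ServiceConnection is itself one link in a chain that goes through ServiceManager: if ServiceManager did not exist, no ServiceConnection could ever fire. ServiceManager plays the role that a DNS server plays in networking, and its address is fixed. That is why it is obtained differently from ordinary services. So how do we get it? Continuing from getContextObject() above, execution moves into android_util_Binder.cpp: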

```cpp
static const JNINativeMethod gBinderInternalMethods[] = {
    /* name, signature, funcPtr */
    { "getContextObject", "()Landroid/os/IBinder;", (void*)android_os_BinderInternal_getContextObject },
    { "joinThreadPool", "()V", (void*)android_os_BinderInternal_joinThreadPool },
    { "disableBackgroundScheduling", "(Z)V", (void*)android_os_BinderInternal_disableBackgroundScheduling },
    { "setMaxThreads", "(I)V", (void*)android_os_BinderInternal_setMaxThreads },
    { "handleGc", "()V", (void*)android_os_BinderInternal_handleGc },
    { "nSetBinderProxyCountEnabled", "(Z)V", (void*)android_os_BinderInternal_setBinderProxyCountEnabled },
    { "nGetBinderProxyPerUidCounts", "()Landroid/util/SparseIntArray;", (void*)android_os_BinderInternal_getBinderProxyPerUidCounts },
    { "nGetBinderProxyCount", "(I)I", (void*)android_os_BinderInternal_getBinderProxyCount },
    { "nSetBinderProxyCountWatermarks", "(II)V", (void*)android_os_BinderInternal_setBinderProxyCountWatermarks}
};
```
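Each entry in this table pairs a Java method name and its JNI type signature with a native function pointer. The table is registered with the VM, ultimately through JNIEnv::RegisterNatives. After that, a call to the Java `native` method jumps to the matching pointer. The sketch below imitates only the lookup by name and signature, without any real JNI. `NativeMethodSketch`, `findNative` and the fake functions are hypothetical.

```cpp
#include <cassert>
#include <cstring>
#include <string>

using NativeFn = const char* (*)();

// Fake native implementations; each one just reports which native symbol it stands for.
const char* fakeGetContextObject() { return "android_os_BinderInternal_getContextObject"; }
const char* fakeJoinThreadPool() { return "android_os_BinderInternal_joinThreadPool"; }

struct NativeMethodSketch {
    const char* name;       // Java method name
    const char* signature;  // JNI type signature
    NativeFn fnPtr;         // native implementation
};

const NativeMethodSketch kBinderInternalMethodsSketch[] = {
    {"getContextObject", "()Landroid/os/IBinder;", fakeGetContextObject},
    {"joinThreadPool", "()V", fakeJoinThreadPool},
};

// Both name and signature must match, which is what allows overloads to coexist.
NativeFn findNative(const char* name, const char* signature) {
    for (const auto& m : kBinderInternalMethodsSketch) {
        if (std::strcmp(m.name, name) == 0 && std::strcmp(m.signature, signature) == 0) {
            return m.fnPtr;
        }
    }
    return nullptr;
}
```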

Execution then continues here:

```cpp
static jobject android_os_BinderInternal_getContextObject(JNIEnv* env, jobject clazz)
{
    // Build an IBinder in the native layer; what we actually get back is a BpBinder.
    sp<IBinder> b = ProcessState::self()->getContextObject(NULL);
    // b is a native object; it must be converted into a Java object before Java code can use it.
    return javaObjectForIBinder(env, b);
}
```

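ProcessState is set up when each process starts and represents that process's Binder state. Its main jobs are initializing the connection to the Binder driver and the Binder thread pool, which is what makes talking to ServiceManager possible.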
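ProcessState is a per-process singleton: ProcessState::self() creates it on first use and returns the same instance afterwards. The sketch below shows that pattern under a mutex. `ProcessStateSketch` is a hypothetical class. Its constructor merely counts how often it runs, whereas the real one opens the Binder driver and maps its buffer.

```cpp
#include <atomic>
#include <cassert>
#include <memory>
#include <mutex>

class ProcessStateSketch {
public:
    // Lazily create the single instance; later calls return the same object.
    static std::shared_ptr<ProcessStateSketch> self() {
        static std::mutex gLock;
        static std::shared_ptr<ProcessStateSketch> gInstance;
        std::lock_guard<std::mutex> guard(gLock);
        if (!gInstance) {
            gInstance.reset(new ProcessStateSketch());  // private ctor, so no make_shared
        }
        return gInstance;
    }
    static int initCount() { return initCount_.load(); }

private:
    // Stands in for the one-time setup (opening the driver, mmap of the buffer).
    ProcessStateSketch() { ++initCount_; }
    static inline std::atomic<int> initCount_{0};
};
```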

Inside ProcessState::self()->getContextObject(NULL):

```cpp
sp<IBinder> ProcessState::getContextObject(const sp<IBinder>& /*caller*/)
{
    // Handle 0 always refers to ServiceManager, so 0 is what gets passed to getStrongProxyForHandle().
    return getStrongProxyForHandle(0);
}
```

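If no proxy exists for the handle yet, one is simply created with new. The listing below is getWeakProxyForHandle() from the hwbinder copy of ProcessState, which is why it creates a BpHwBinder. The libbinder getStrongProxyForHandle() called above follows the same lookup-or-create pattern but creates a BpBinder: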

```cpp
wp<IBinder> ProcessState::getWeakProxyForHandle(int32_t handle)
{
    wp<IBinder> result;

    AutoMutex _l(mLock);

    handle_entry* e = lookupHandleLocked(handle);

    if (e != nullptr) {
        // We need to create a new BpHwBinder if there isn't currently one, OR we
        // are unable to acquire a weak reference on this current one. The
        // attemptIncWeak() is safe because we know the BpHwBinder destructor will always
        // call expungeHandle(), which acquires the same lock we are holding now.
        // We need to do this because there is a race condition between someone
        // releasing a reference on this BpHwBinder, and a new reference on its handle
        // arriving from the driver.
        IBinder* b = e->binder;
        if (b == nullptr || !e->refs->attemptIncWeak(this)) {
            // No usable proxy for this handle yet, so create one.
            // This is why the client always ends up holding a Bp* proxy.
            b = new BpHwBinder(handle);
            result = b;
            e->binder = b;
            if (b) e->refs = b->getWeakRefs();
        } else {
            result = b;
            e->refs->decWeak(this);
        }
    }

    return result;
}
```
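The lookup-or-create pattern above can be modeled with the standard library. In this hedged sketch, std::weak_ptr stands in for Android's RefBase weak references. `lock()` fails both when no proxy was ever created and when the last strong reference is already gone, which is roughly the situation the attemptIncWeak() check guards against. `HandleTable` and `ProxySketch` are hypothetical names. Handle 0 plays ServiceManager, as in getContextObject().

```cpp
#include <cassert>
#include <cstdint>
#include <map>
#include <memory>
#include <mutex>

// Hypothetical stand-in for BpBinder/BpHwBinder: it only knows its handle.
struct ProxySketch {
    explicit ProxySketch(int32_t h) : handle(h) {}
    int32_t handle;
};

class HandleTable {
public:
    // Return the live proxy for a handle, or create (and remember) a new one.
    std::shared_ptr<ProxySketch> proxyForHandle(int32_t handle) {
        std::lock_guard<std::mutex> guard(lock_);
        std::weak_ptr<ProxySketch>& entry = entries_[handle];
        if (auto existing = entry.lock()) return existing;  // reuse while still alive
        auto fresh = std::make_shared<ProxySketch>(handle);
        entry = fresh;
        ++created_;
        return fresh;
    }
    int created() const { return created_; }

private:
    std::mutex lock_;
    std::map<int32_t, std::weak_ptr<ProxySketch>> entries_;
    int created_ = 0;
};

// Handle 0 is reserved for the context manager (ServiceManager).
std::shared_ptr<ProxySketch> getContextObject(HandleTable& table) {
    return table.proxyForHandle(0);
}
```

Holding only weak references in the table means the table itself never keeps a proxy alive. Once all users drop it, the next lookup simply builds a new one.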

BpBinder, then, is a client-side wrapper around the driver, identified by the handle it carries.

Inside javaObjectForIBinder(env, b):

```cpp
jobject javaObjectForIBinder(JNIEnv* env, const sp<IBinder>& val)
{
    // N.B. This function is called from a @FastNative JNI method, so don't take locks around
    // calls to Java code or block the calling thread for a long time for any reason.

    if (val == NULL) return NULL;

    if (val->checkSubclass(&gBinderOffsets)) {
        // It's a JavaBBinder created by ibinderForJavaObject. Already has Java object.
        jobject object = static_cast<JavaBBinder*>(val.get())->object();
        LOGDEATH("objectForBinder %p: it's our own %p!\n", val.get(), object);
        return object;
    }

    BinderProxyNativeData* nativeData = new BinderProxyNativeData();
    nativeData->mOrgue = new DeathRecipientList;
    nativeData->mObject = val;

    // Call the static Java method BinderProxy.getInstance() through JNI to create the proxy object.
    // gBinderProxyOffsets is initialized in int_register_android_os_BinderProxy;
    // (jlong) val.get() is the address of the BpBinder.
    jobject object = env->CallStaticObjectMethod(gBinderProxyOffsets.mClass,
            gBinderProxyOffsets.mGetInstance, (jlong) nativeData, (jlong) val.get());
    if (env->ExceptionCheck()) {
        // In the exception case, getInstance still took ownership of nativeData.
        return NULL;
    }
    BinderProxyNativeData* actualNativeData = getBPNativeData(env, object);
    if (actualNativeData == nativeData) {
        // Created a new Proxy
        uint32_t numProxies = gNumProxies.fetch_add(1, std::memory_order_relaxed);
        uint32_t numLastWarned = gProxiesWarned.load(std::memory_order_relaxed);
        if (numProxies >= numLastWarned + PROXY_WARN_INTERVAL) {
            // Multiple threads can get here, make sure only one of them gets to
            // update the warn counter.
            if (gProxiesWarned.compare_exchange_strong(numLastWarned,
                        numLastWarned + PROXY_WARN_INTERVAL, std::memory_order_relaxed)) {
                ALOGW("Unexpectedly many live BinderProxies: %d\n", numProxies);
            }
        }
    } else {
        // An existing BinderProxy was returned, so the new nativeData is not needed.
        delete nativeData;
    }

    return object;
}
```
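Two details of javaObjectForIBinder() are worth isolating. First, BinderProxy.getInstance() may return an existing proxy for the same native object, and in that case the freshly allocated nativeData is deleted. Second, the warning about too many live proxies is throttled with an atomic counter and a compare-and-swap, so that only one thread logs per interval. The sketch below reproduces the second part. The interval value of 5000 is an assumption for illustration, and the two globals are hypothetical copies of gNumProxies and gProxiesWarned.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>

// Assumed interval for illustration; the real PROXY_WARN_INTERVAL may differ.
constexpr uint32_t kProxyWarnInterval = 5000;

std::atomic<uint32_t> gNumProxiesSketch{0};
std::atomic<uint32_t> gProxiesWarnedSketch{0};

// Call once per newly created proxy; returns true if this call should log the warning.
bool onNewProxyCreated() {
    uint32_t numProxies = gNumProxiesSketch.fetch_add(1, std::memory_order_relaxed);
    uint32_t numLastWarned = gProxiesWarnedSketch.load(std::memory_order_relaxed);
    if (numProxies >= numLastWarned + kProxyWarnInterval) {
        // Only the thread whose CAS succeeds advances the watermark and warns.
        return gProxiesWarnedSketch.compare_exchange_strong(
            numLastWarned, numLastWarned + kProxyWarnInterval, std::memory_order_relaxed);
    }
    return false;
}
```

The compare-and-swap ensures that when several threads cross the threshold at once, only one of them moves the watermark forward and logs.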

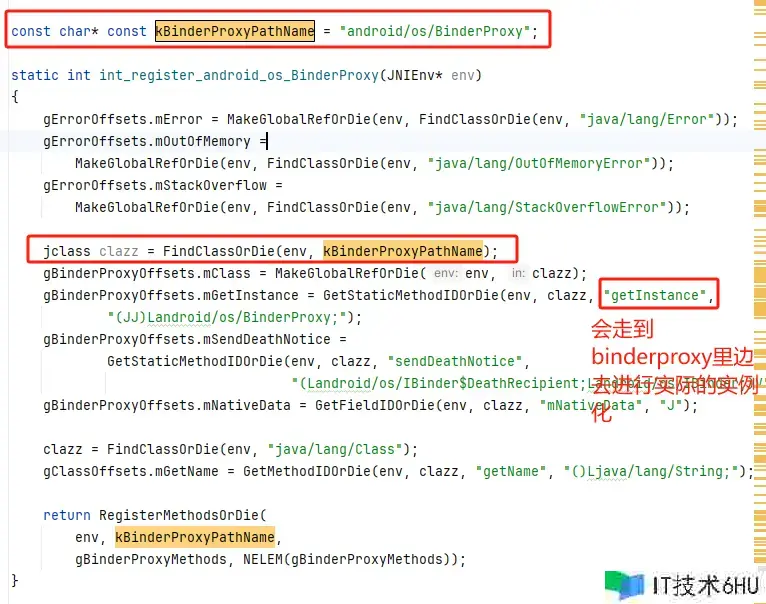

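gBinderProxyOffsets.mGetInstance refers to the Java method BinderProxy.getInstance(). That method is where the BinderProxy object is actually created and initialized.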

Summarizing the flow above, this is how access to ServiceManager is layered. The call flow in the source:

ServiceManagerProxy (Stub.Proxy) -> IServiceManager.Stub.Proxy.getService() -> BinderProxy.transact() -> android_util_Binder.cpp: android_os_BinderProxy_transact() -> BpBinder::transact()